Introduction

Introduction to GPU programming

Overview

1. What is a GPU

2. GPU hardware

3. Programming GPUs

4. Software - hardware mapping

Part 1: What is a GPU?

What is a GPU?

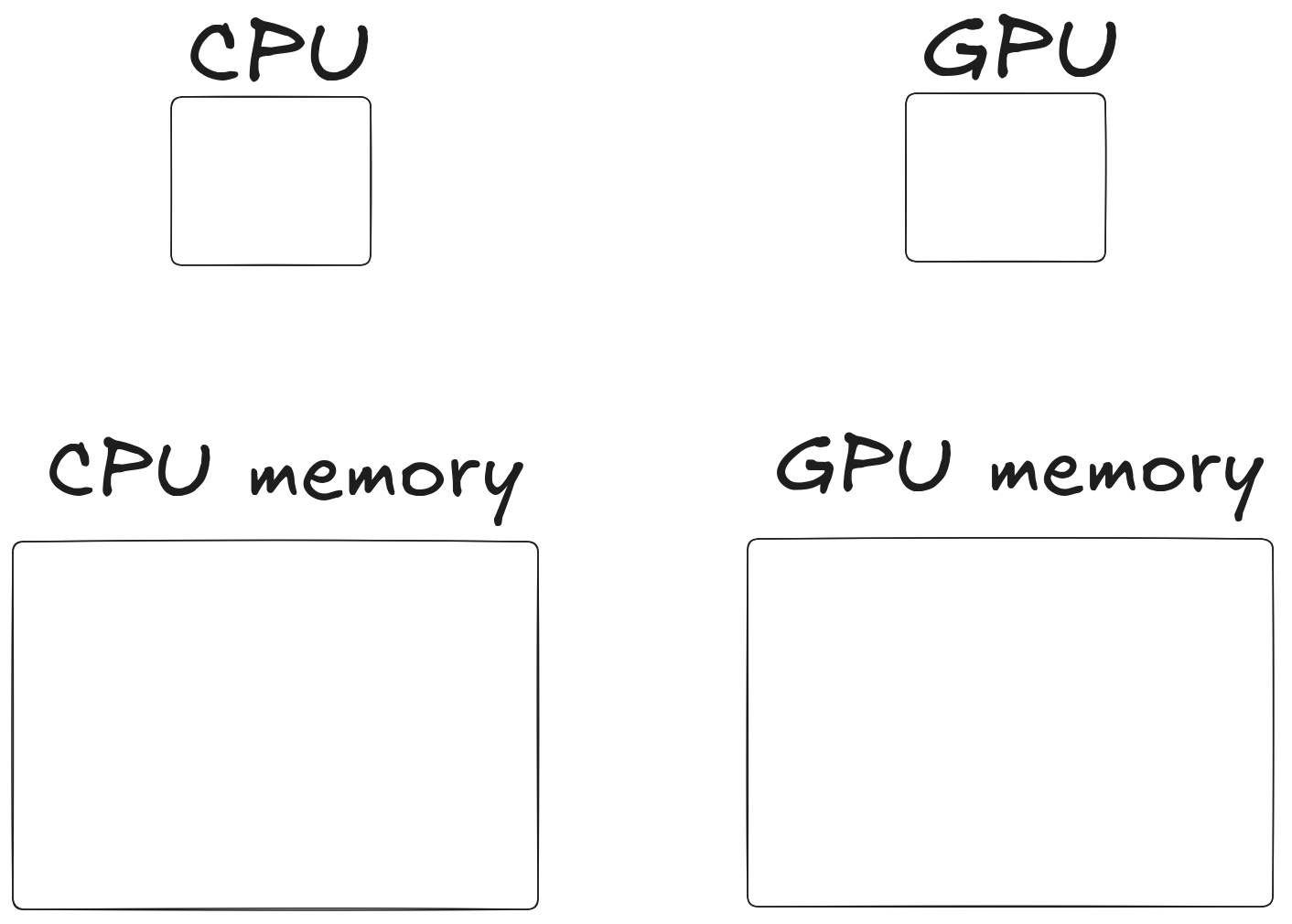

GPU is a processor with a dedicated memory area

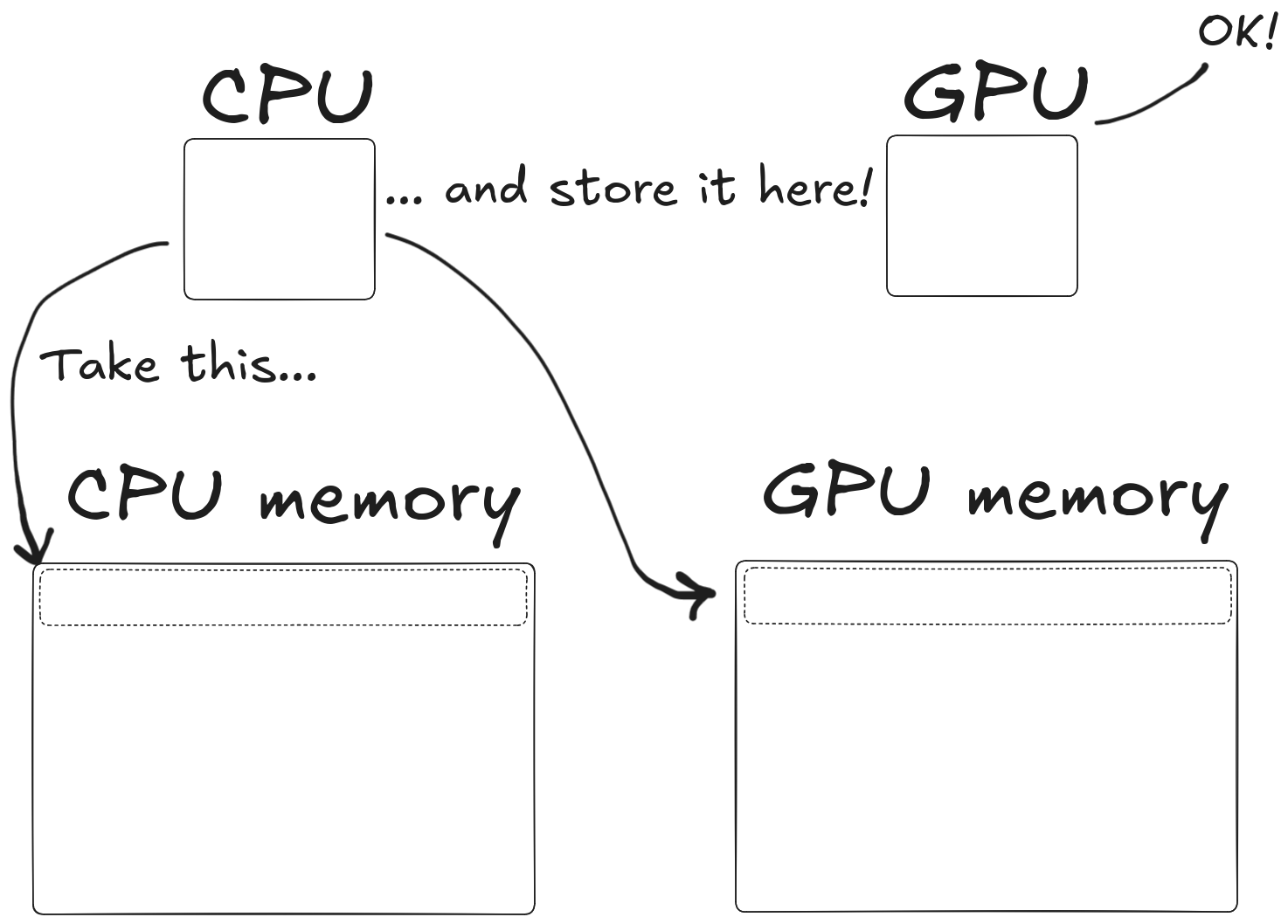

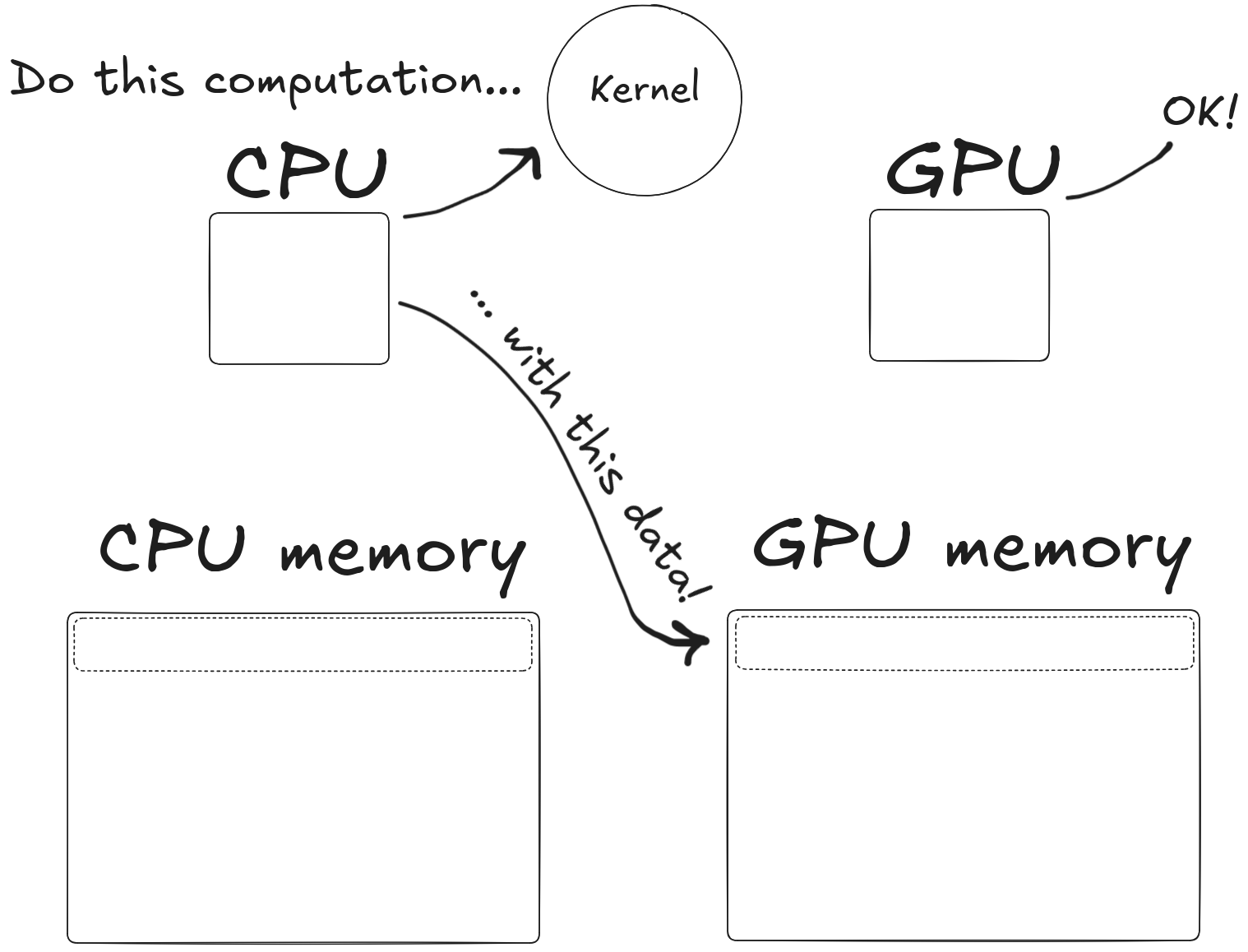

How do I use the GPU?

To use it, you have to

- Copy memory from CPU to GPU

How do I use the GPU?

To use it, you have to

- Copy memory from CPU to GPU

- Tell the GPU what to do with that data

How do I use the GPU?

To use it, you have to

- Copy memory from CPU to GPU

- Tell the GPU what to do with that data

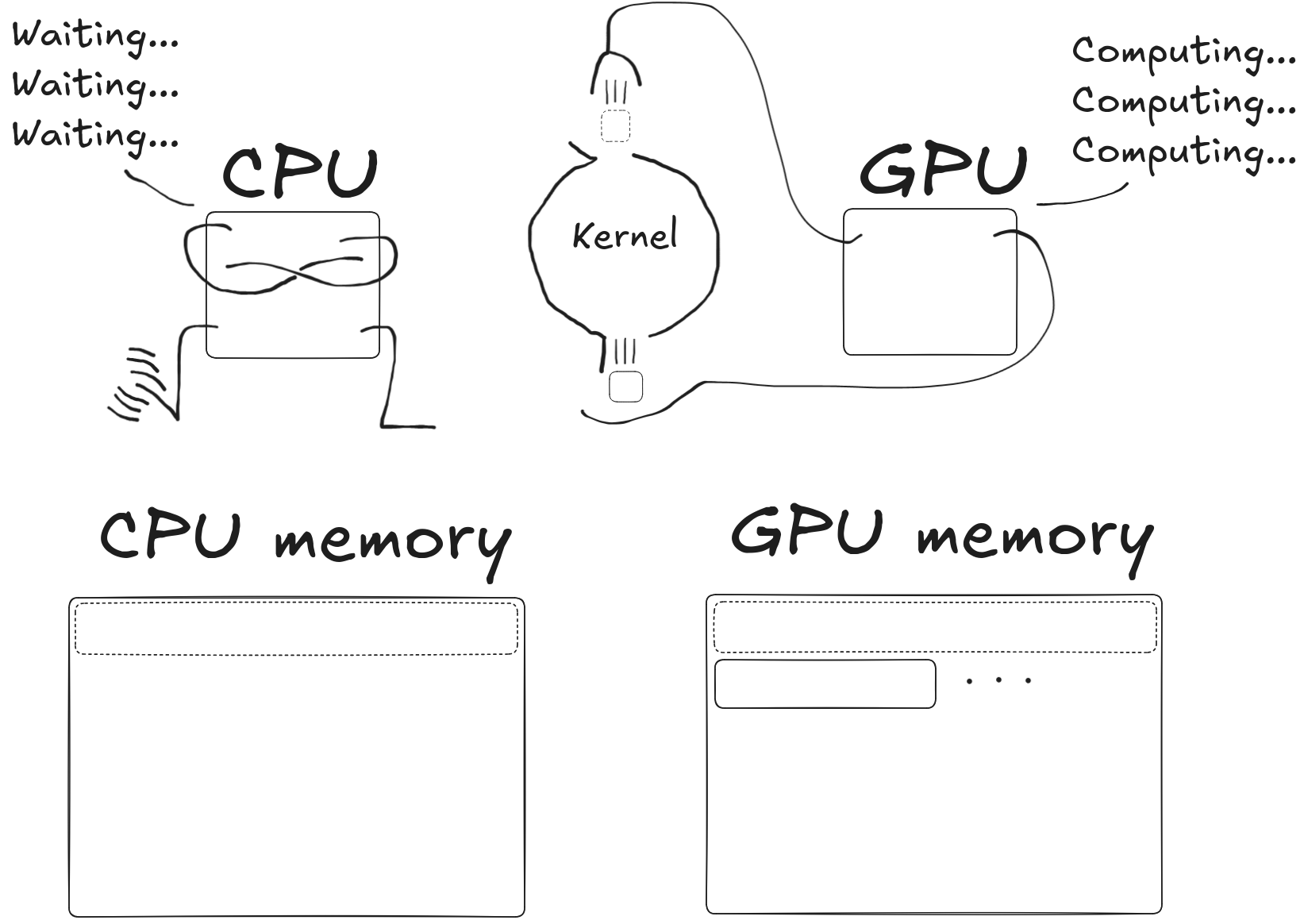

- Wait for the GPU to finish doing what you told it to do

How do I use the GPU?

To use it, you have to

- Copy memory from CPU to GPU

- Tell the GPU what to do with that data

- Wait for the GPU to finish doing what you told it to do

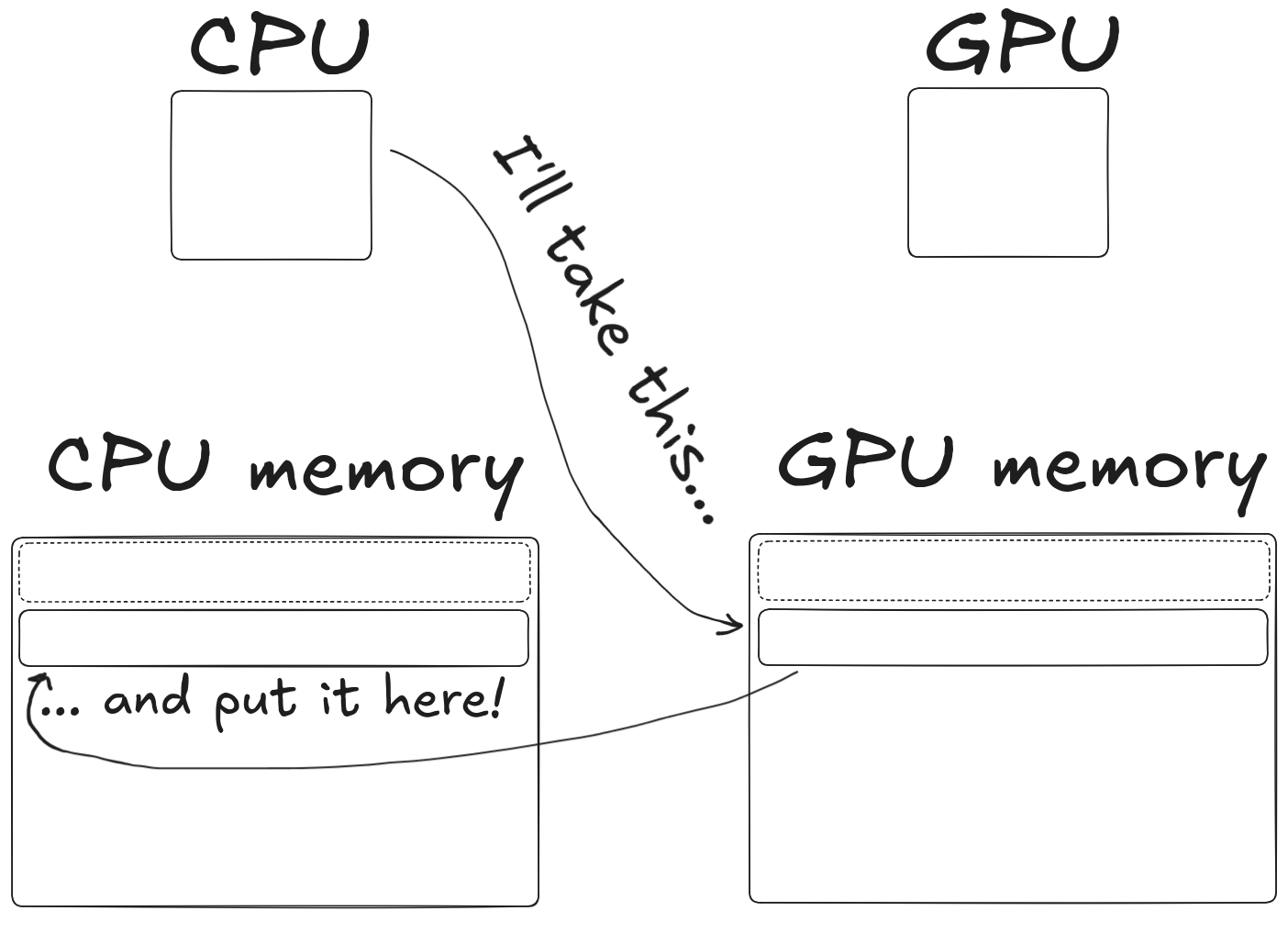

- Copy memory from GPU back to the CPU

Why?

But why?

Why move data back and forth from CPU to GPU to CPU?

What’s the benefit?

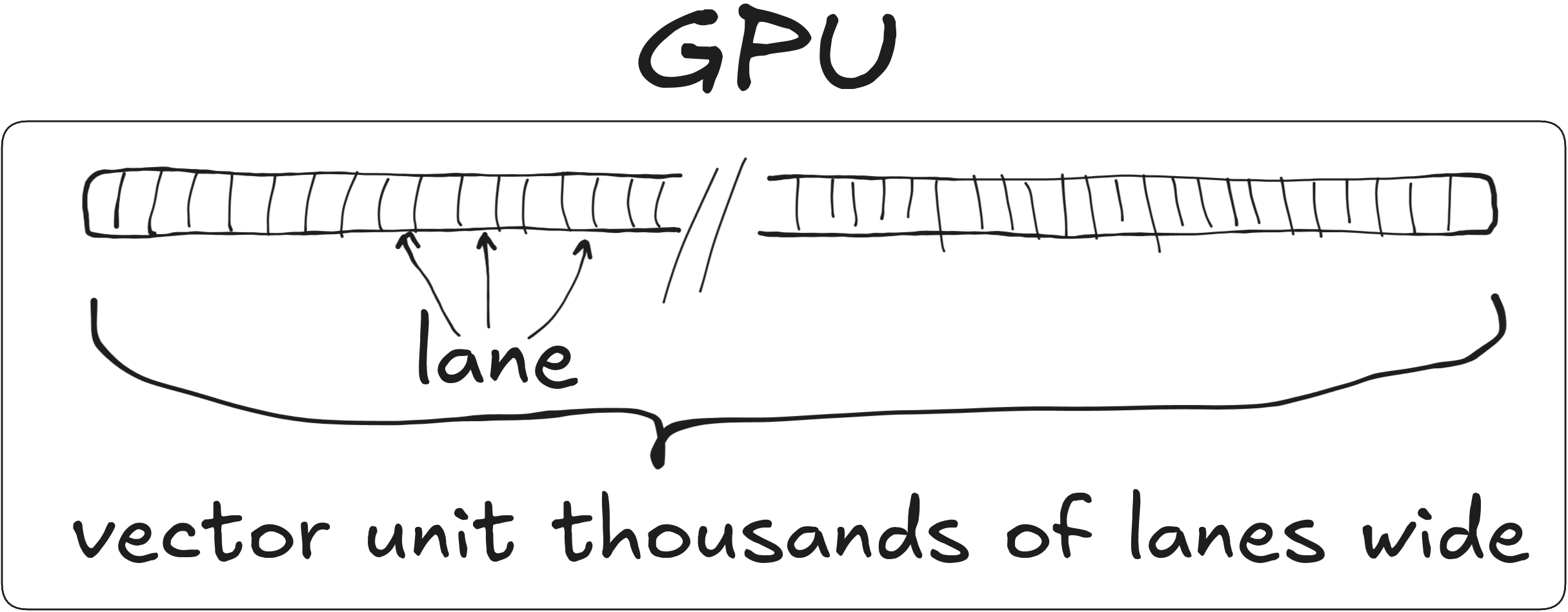

GPU as a wide SIMD unit

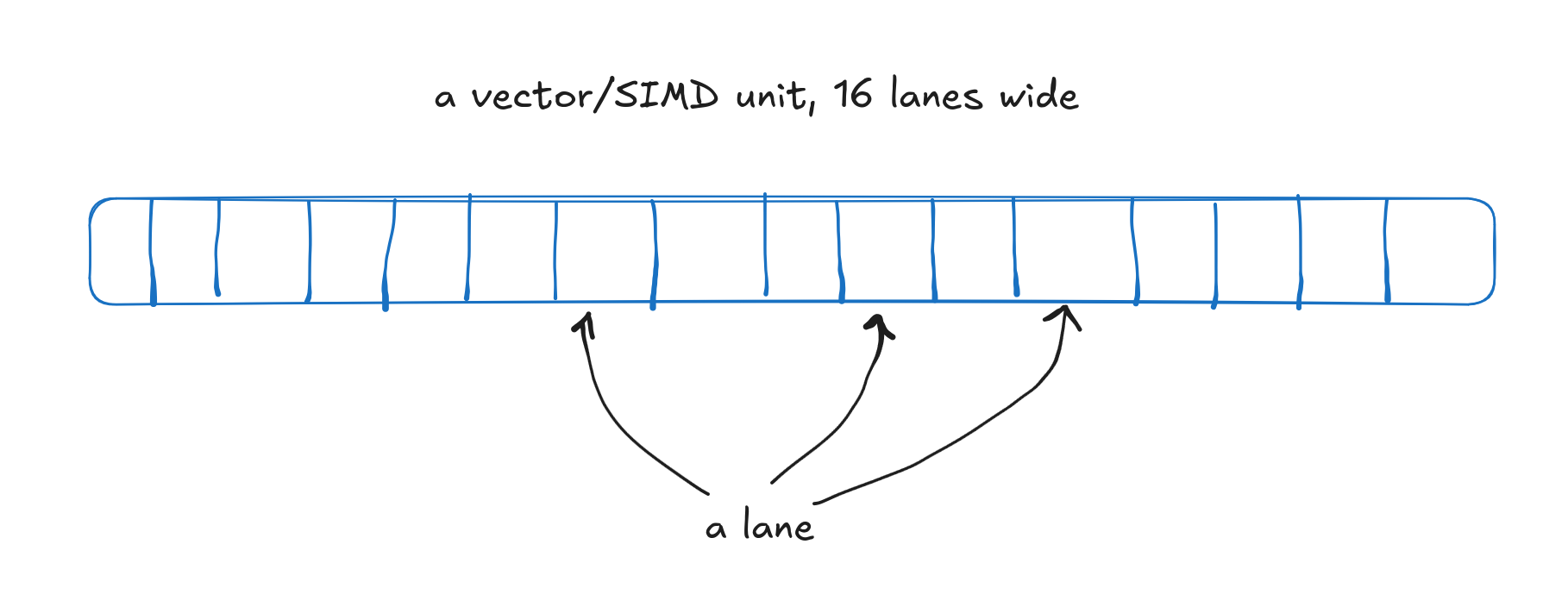

SIMD

- SIMD = Single Instruction, Multiple Data

- Same exact instruction (e.g. “integer add”) to multiple pieces of data

- Throughput: Width of unit \(\times\) throughput of scalar unit

- CPUs & GPUs both use SIMD

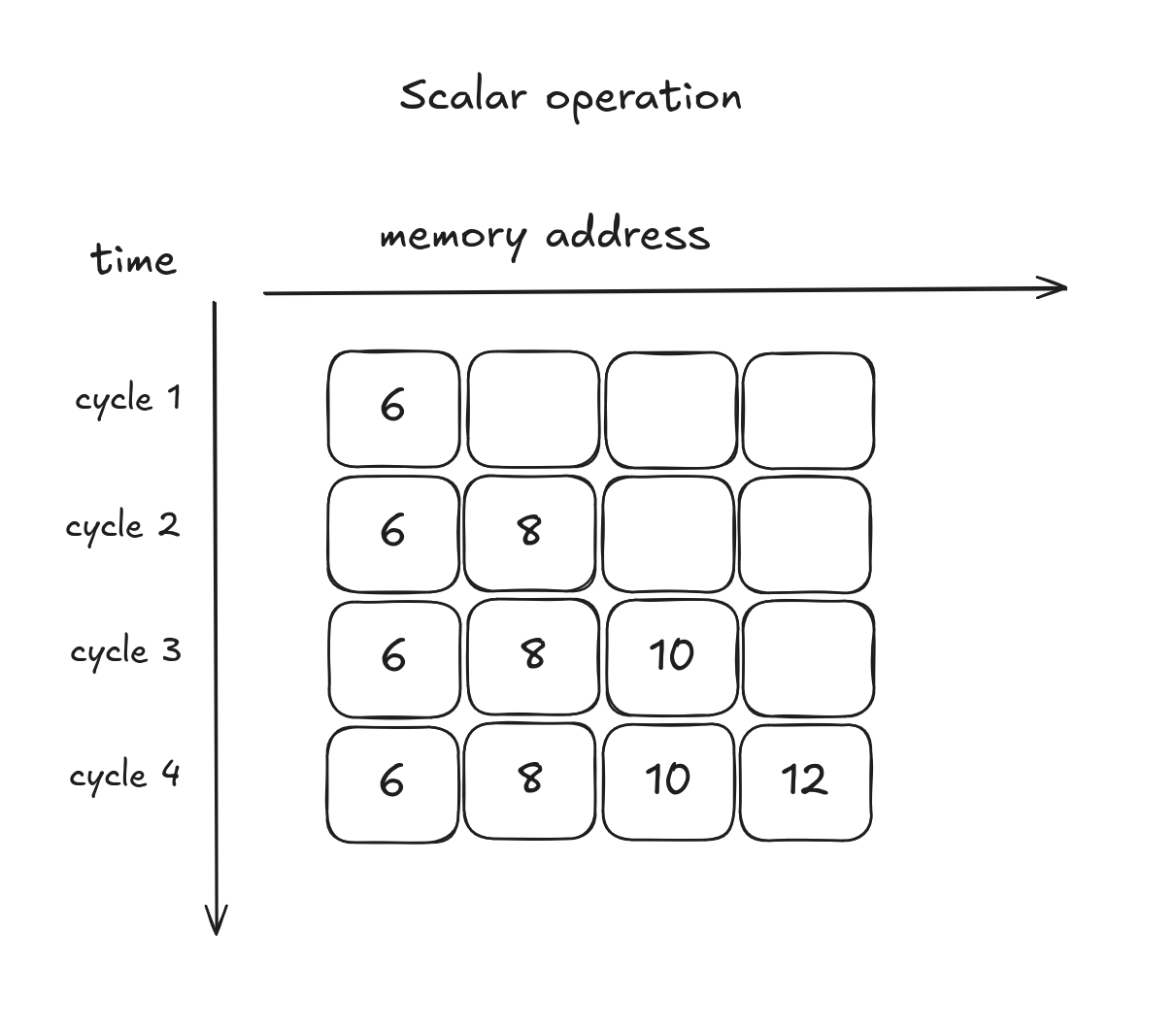

Scalar addition

Element-wise add for arrays a and b

resulting in array c

4 cycles, 4 elements: throughput = 1

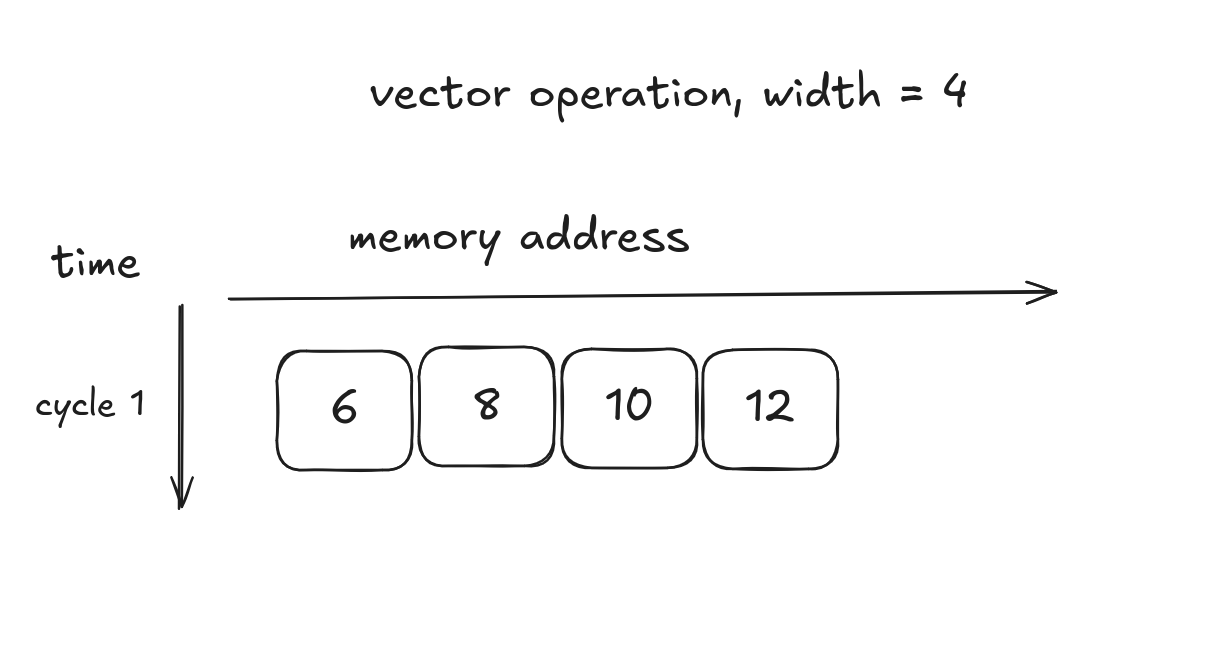

SIMD addition

Element-wise add for arrays a and b

resulting in array c

1 cycle, 4 elements: throughput = 4

Which is faster, CPU or GPU?

It takes time to

- Move data to the GPU

- Compute on the GPU

- Move results to the CPU

So is it faster to use the CPU or the GPU?

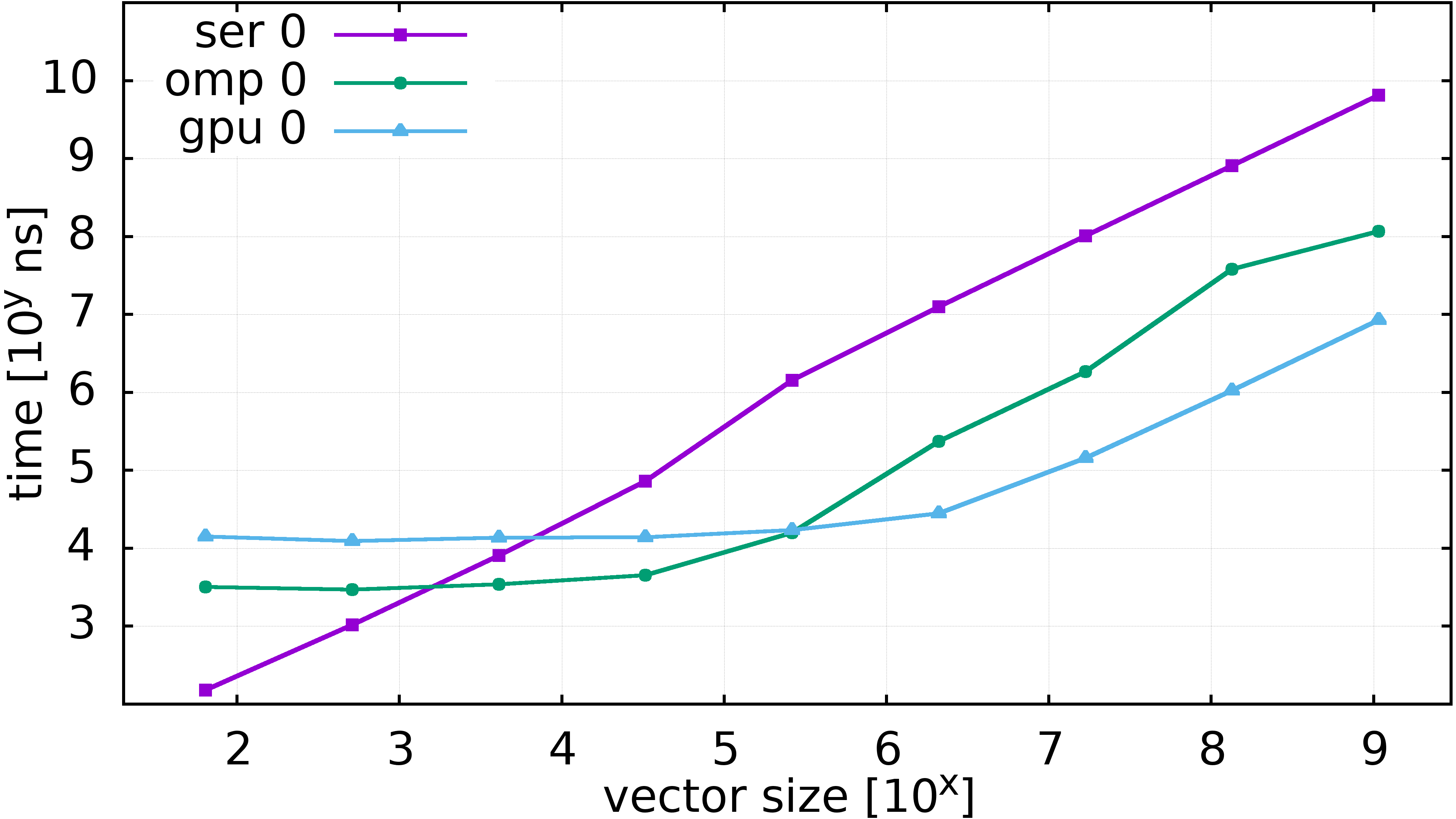

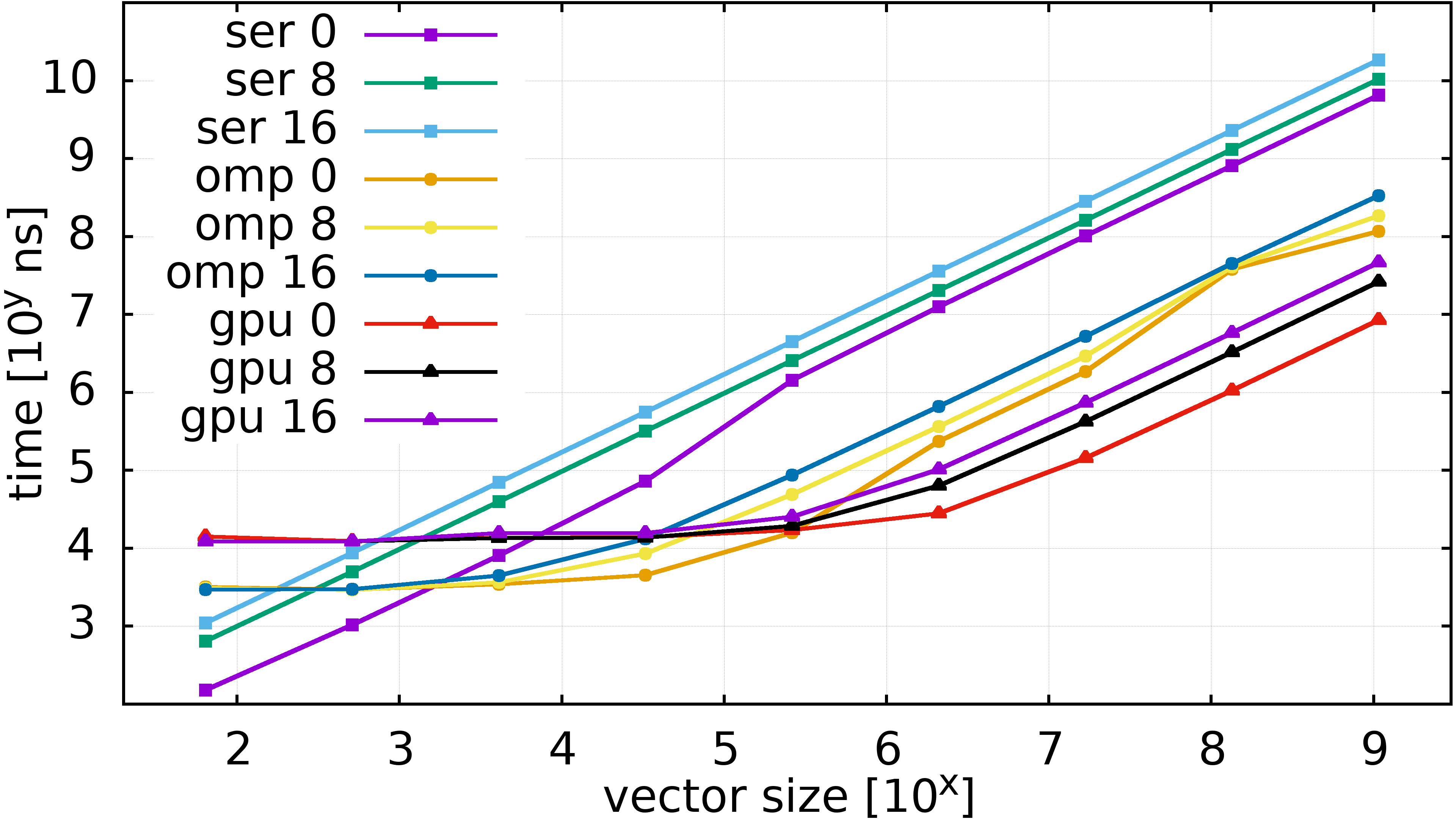

Runtimes of Taylor expansion, little computation

- \(y_i \gets \sum_{n = 0}^{0} \frac{x_i^n}{n!}\)

- \(i = 1\dots\) vector size

- Only 1 term (“\(x^0\)”) but do the arithmetic

- Init \((x_1,\dots)\) on device (GPU)

- Starting always on CPU memory would make CPU faster!

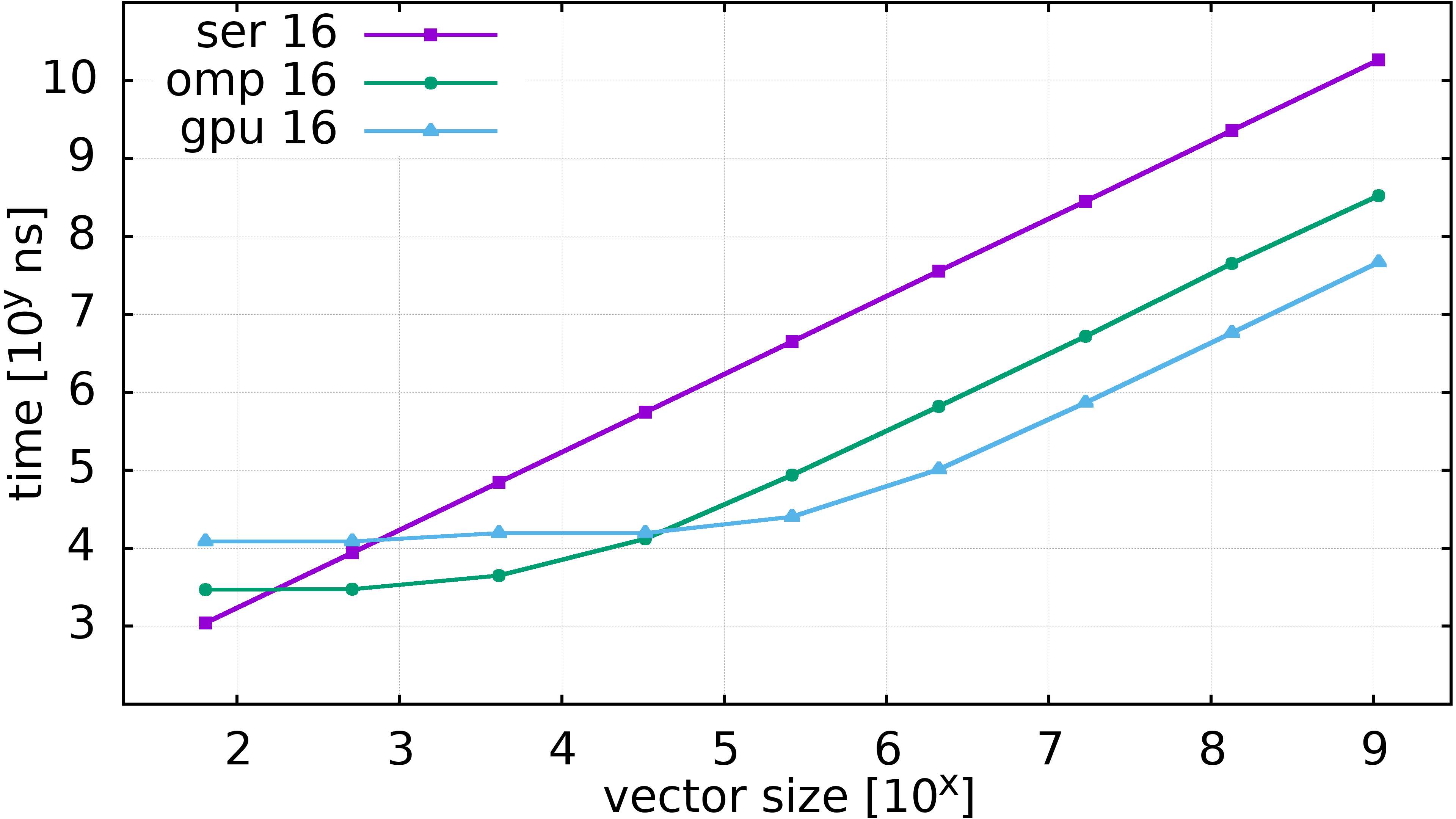

Runtimes of Taylor expansion, more computation

- \(y_i \gets \sum_{n = 0}^{16} \frac{x_i^n}{n!}\)

- Compute units are not mostly waiting for data

Runtimes of Taylor expansion, \(N=0,8,16\)

Recap

- massively parallel processor

- own memory space –> requires data movement

- performs instructions to multiple pieces of data at the same time

- useful when you have a lot of data

Part 2: Model of GPU Hardware

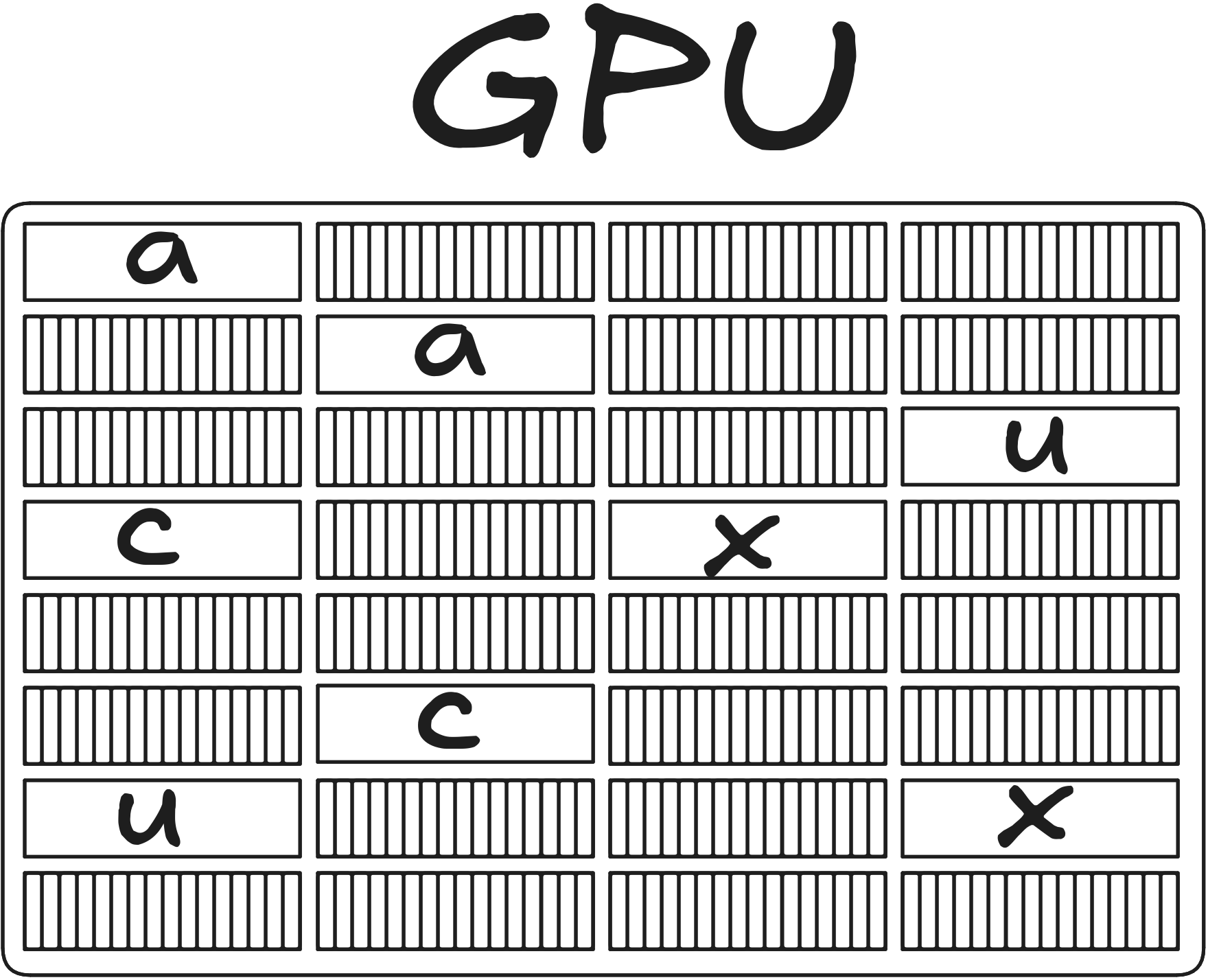

GPU as a wide SIMD unit

32 operations

1024 lanes

Utilization: \[ 32 / 1024 = 1 / 32 \approx 3\% \]

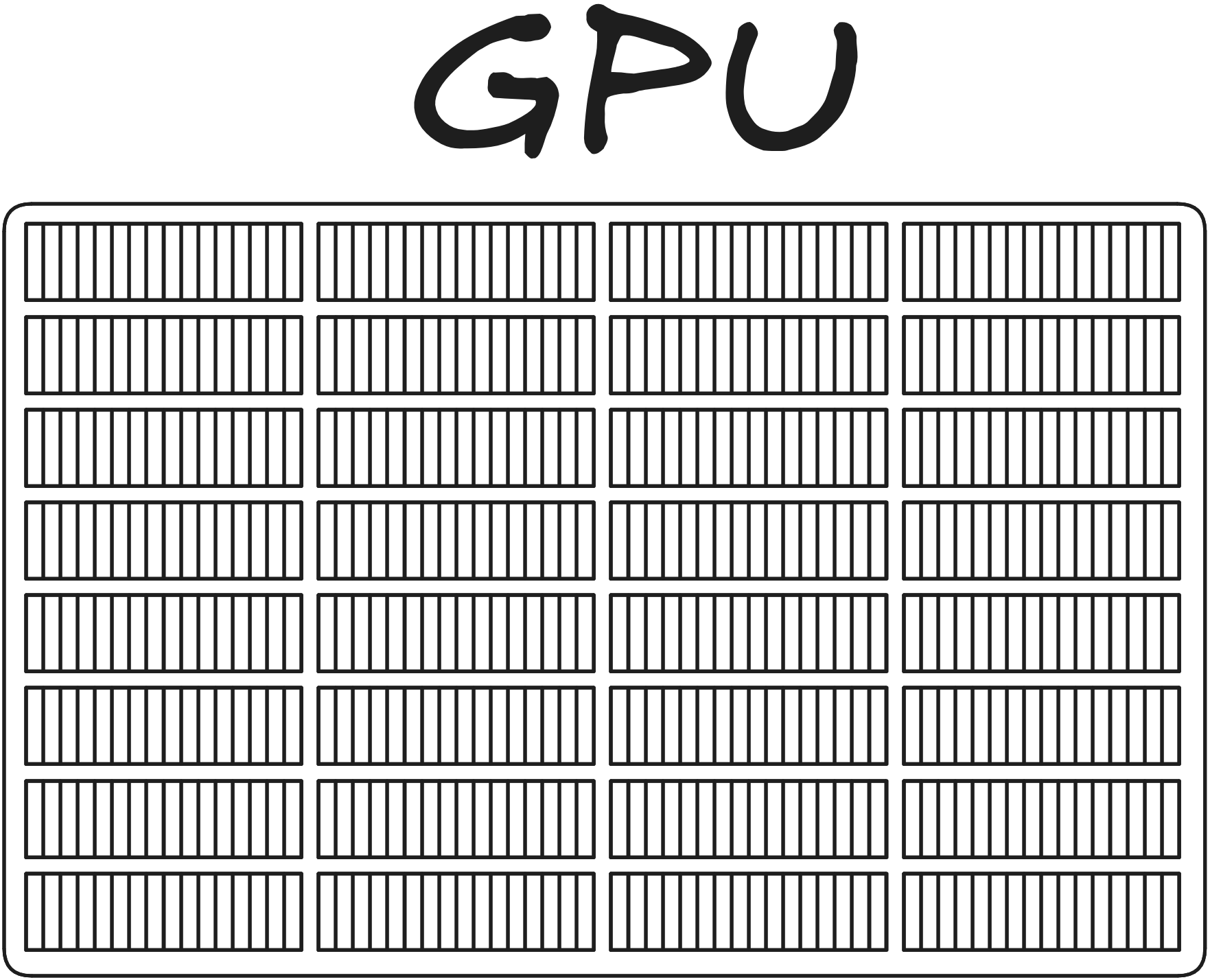

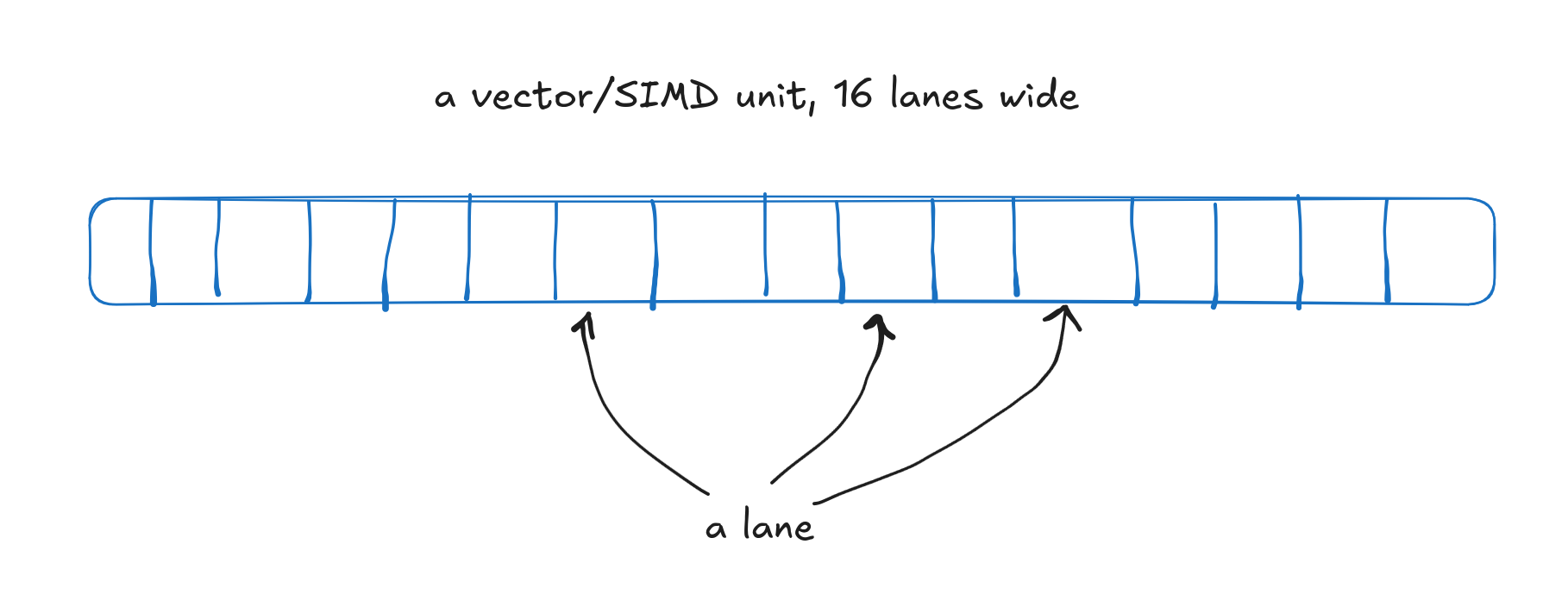

GPU as a collection of independents vector units

32 vector units

A vector unit, 16 lanes wide

GPU as a collection of independents vector units

32 vector units, executing different instructions

A vector unit, 16 lanes wide

Who controls the vector units?

Hand written SIMD for CPUs

// Multiply 8 floats by another 8 floats

// on the CPU, using SIMD.

template<int offsetRegs>

inline __m256 mul8(const float* p1, const float* p2)

{

constexpr int lanes = offsetRegs * 8;

const __m256 a = _mm256_loadu_ps(p1 + lanes);

const __m256 b = _mm256_loadu_ps(p2 + lanes);

return _mm256_mul_ps(a, b);

}- Could do the same on the GPU using (hardware vendor specific) assembly-like language

- More common to use higher level APIs like Cuda/HIP/Sycl

- SIMT = Single Instruction, Multiple Threads

Who controls the vector units?

Is this realistic?

Who controls the vector units?

No

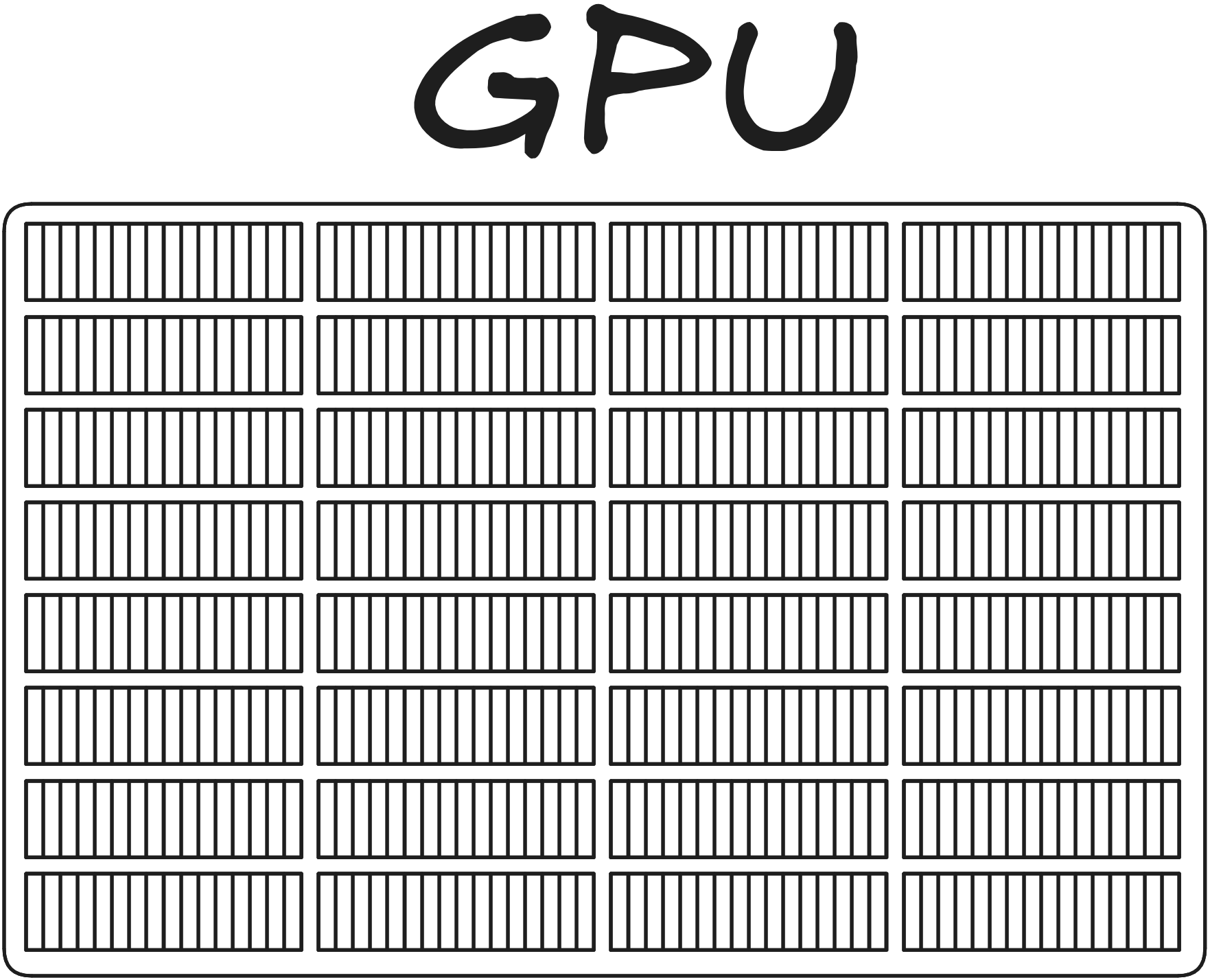

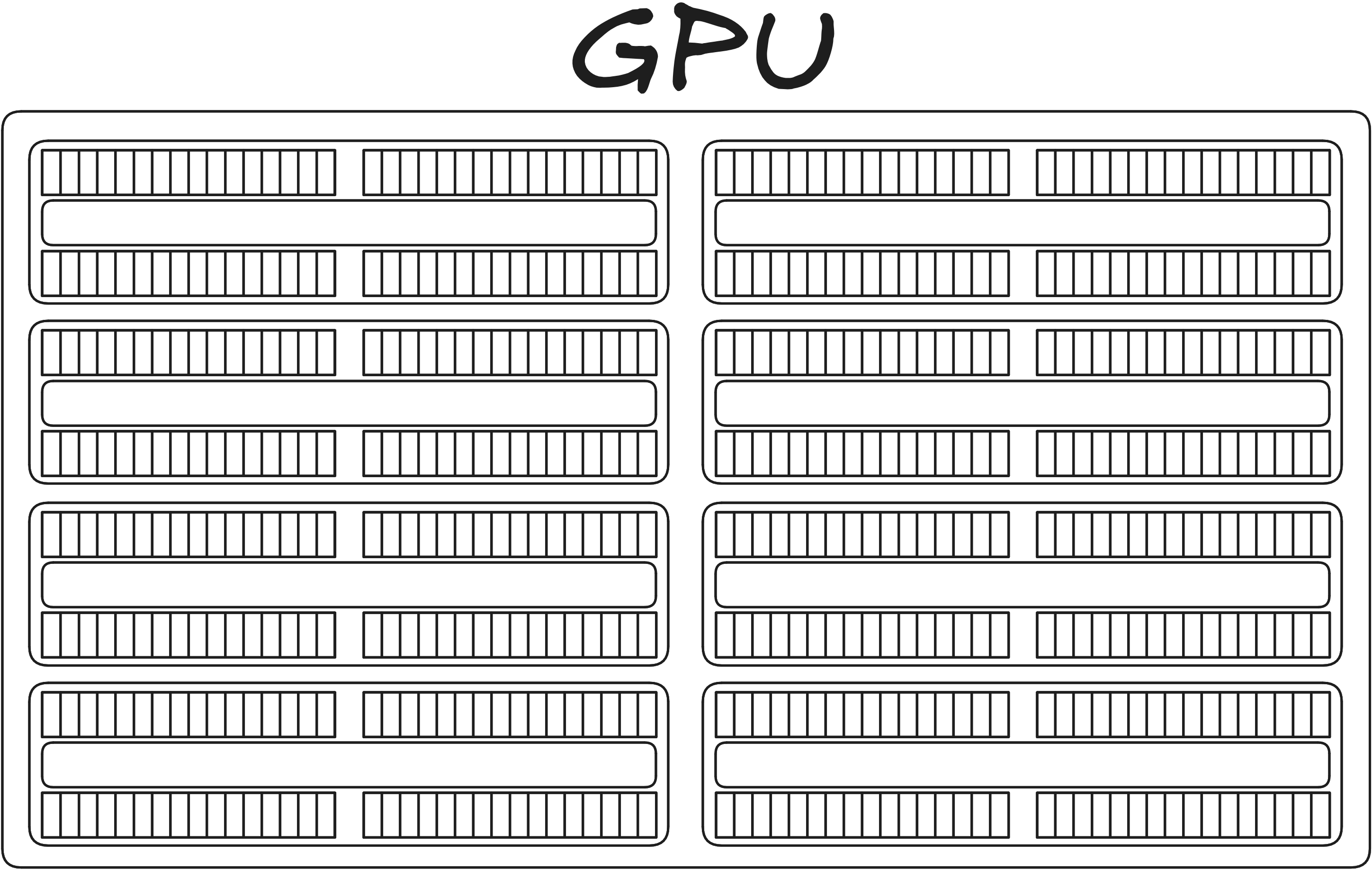

GPU as a collection of processors

Tens or hundreds of simple processors (this model has 8)

Tens or hundreds of simple processors (this model has 8)

- CU = Compute Unit (AMD)

- SM = Streaming Multiprocessor (Nvidia)

- EU = Execution Unit (Intel)

MI250X

- CUs

- memory

- links to other hardware

Image: LUMI consortium

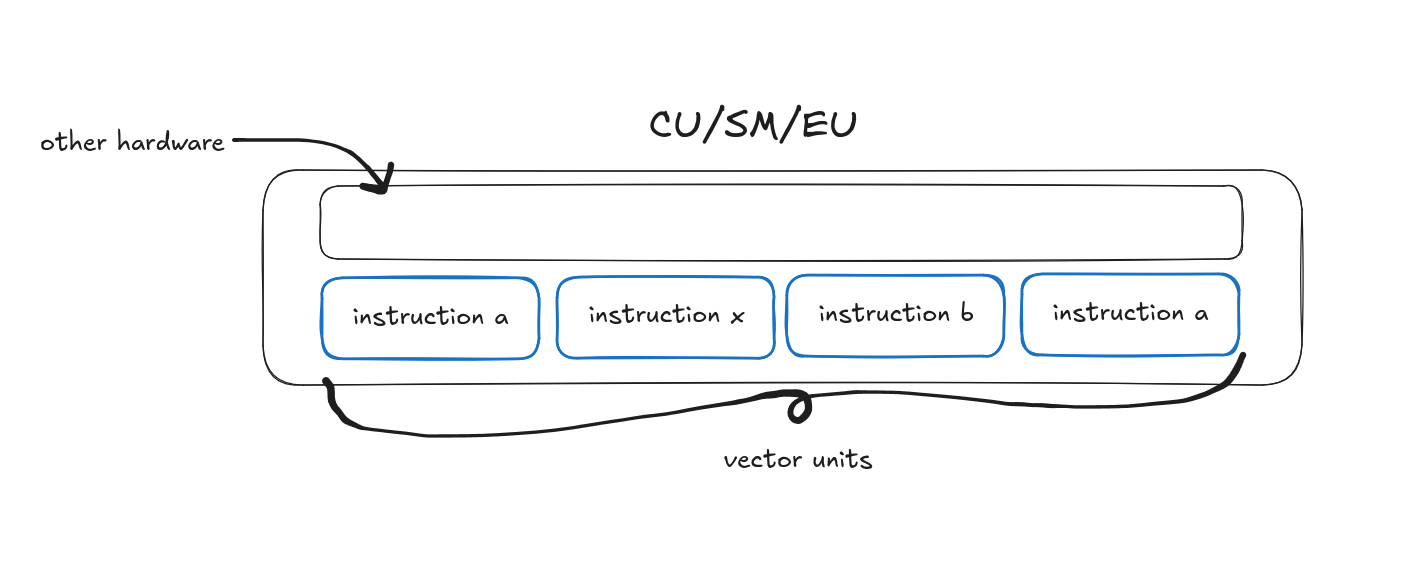

MI250X, Compute Unit

- 4 “SIMD-units”

- four sets of 16 SIMD lanes and matrix units

- local data share (LDS)

- L1 cache

- scheduler

- other hardware

Image: LUMI consortium

H100

- streaming multiprocessors (SM)

- memory

- links to other hardware

H100, Streaming Multiprocessor

- four SM sub-partitions (SMSP)

- cores for INT32, FP32, FP64

- \(\sim\) SIMD lanes

- L1 Cache (max 128 kB) / Shared memory (max 228 kB)

- other hardware (scheduler, instruction cache etc)

Recap

GPU

- is a massively parallel processor

- has (typically) dedicated memory space \(\longrightarrow\) (typically) explicit data movement

- has \(10^1 \dots 10^2\) of simple processors, multiple vector units per processor

- does \(10^1\dots 10^2\times\) more instructions per cycle than CPUs

Part 3: Programming GPUs

Programming GPUs

- SIMT = Single Instruction, Multiple Threads

- Write parallel code from the perspective of a single thread

Programming GPUs

- Context: Execute some code over the size of the arrays using a double loop

- Single thread point of view: Perform addition of two elements \(c_{ij} = a_{ij} + b_{ij}\)

Programming GPUs

Division of responsibilities

Our responsibility: how many threads, in what configuration

- 32768 threads in 1D

- 32 x 1024 threads in 2D

- 32 x 512 x 2 threads in 3D

Device’s responsibility: launch enough threads and call the supplied code for each

Thread hierarchy

Thread hierarchy, previous is the building block of the next level

- a thread

- a block of threads

- a grid of blocks

Thread

A single thread is the smallest unit

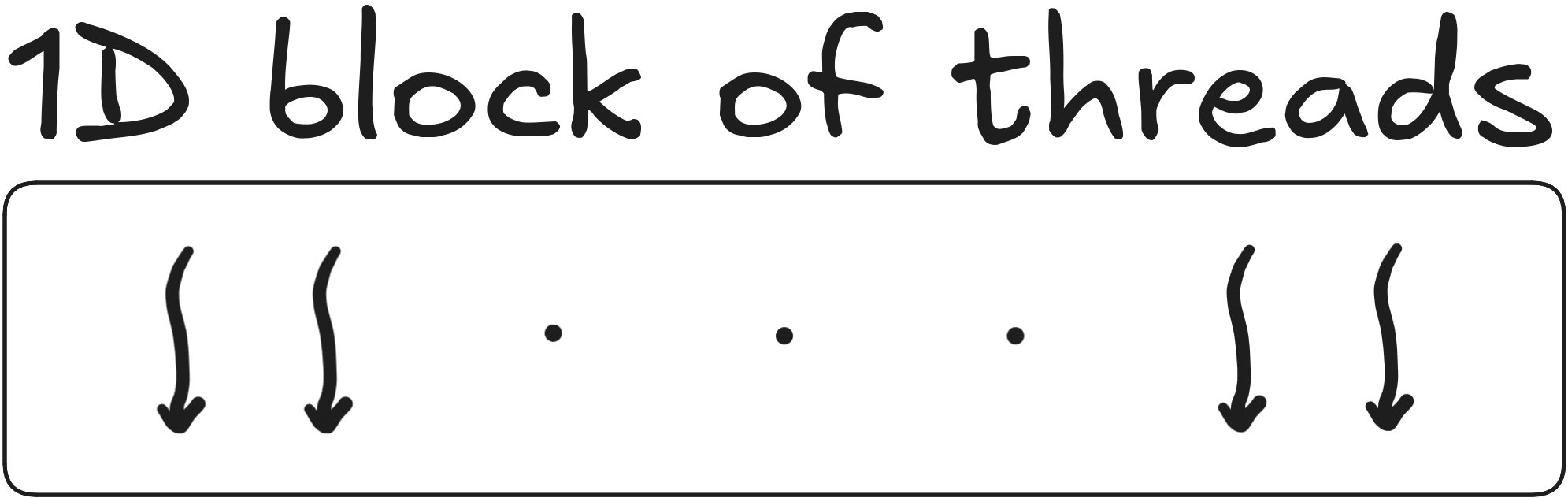

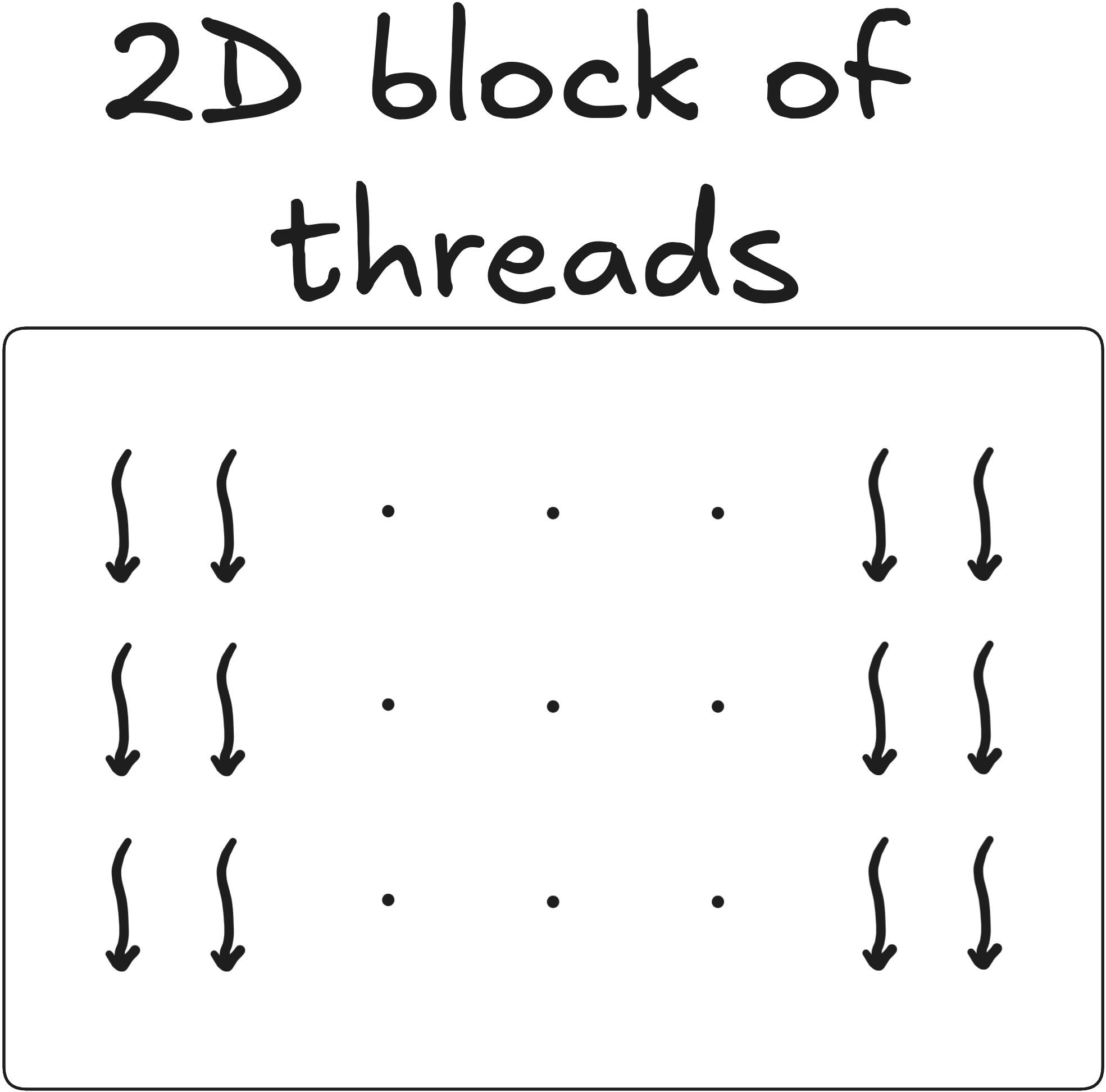

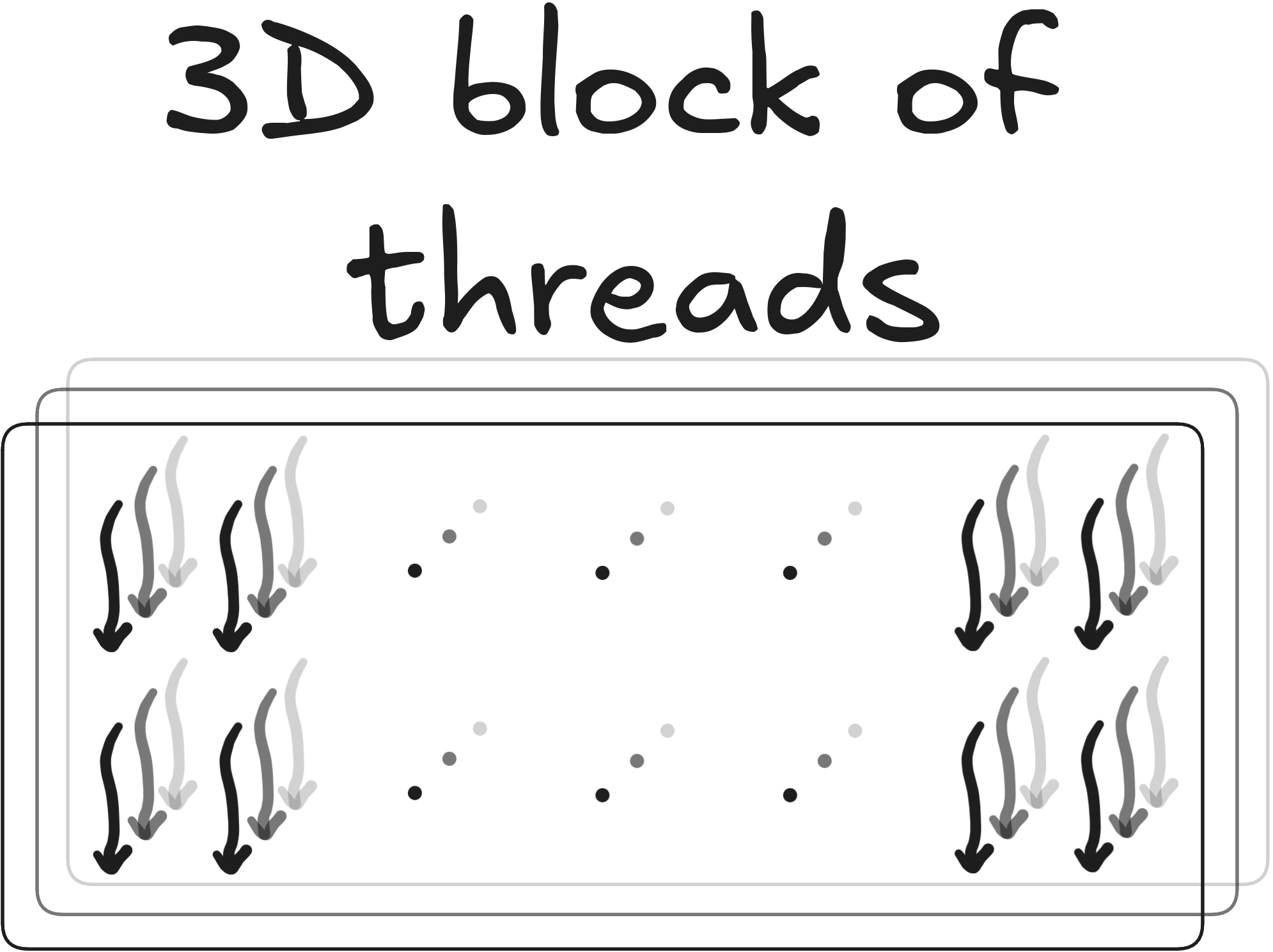

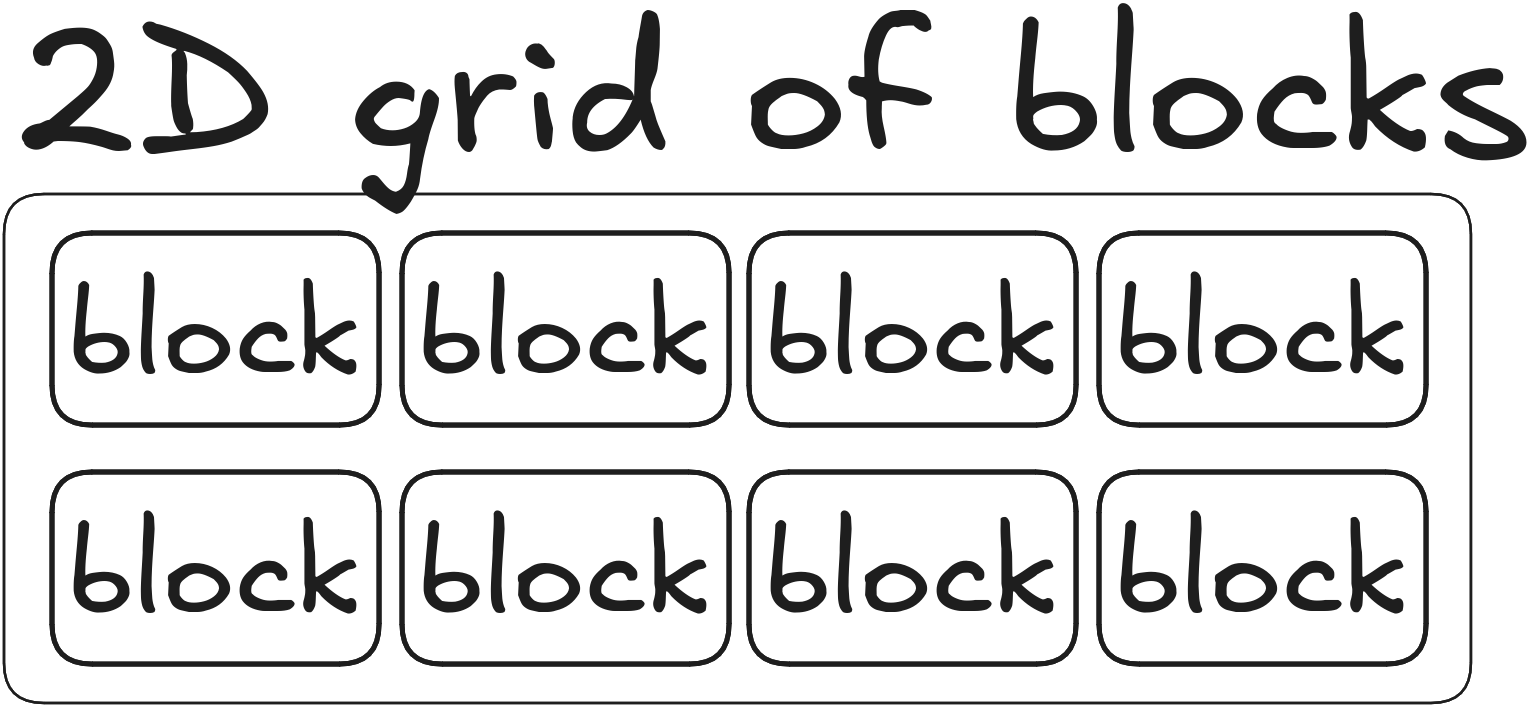

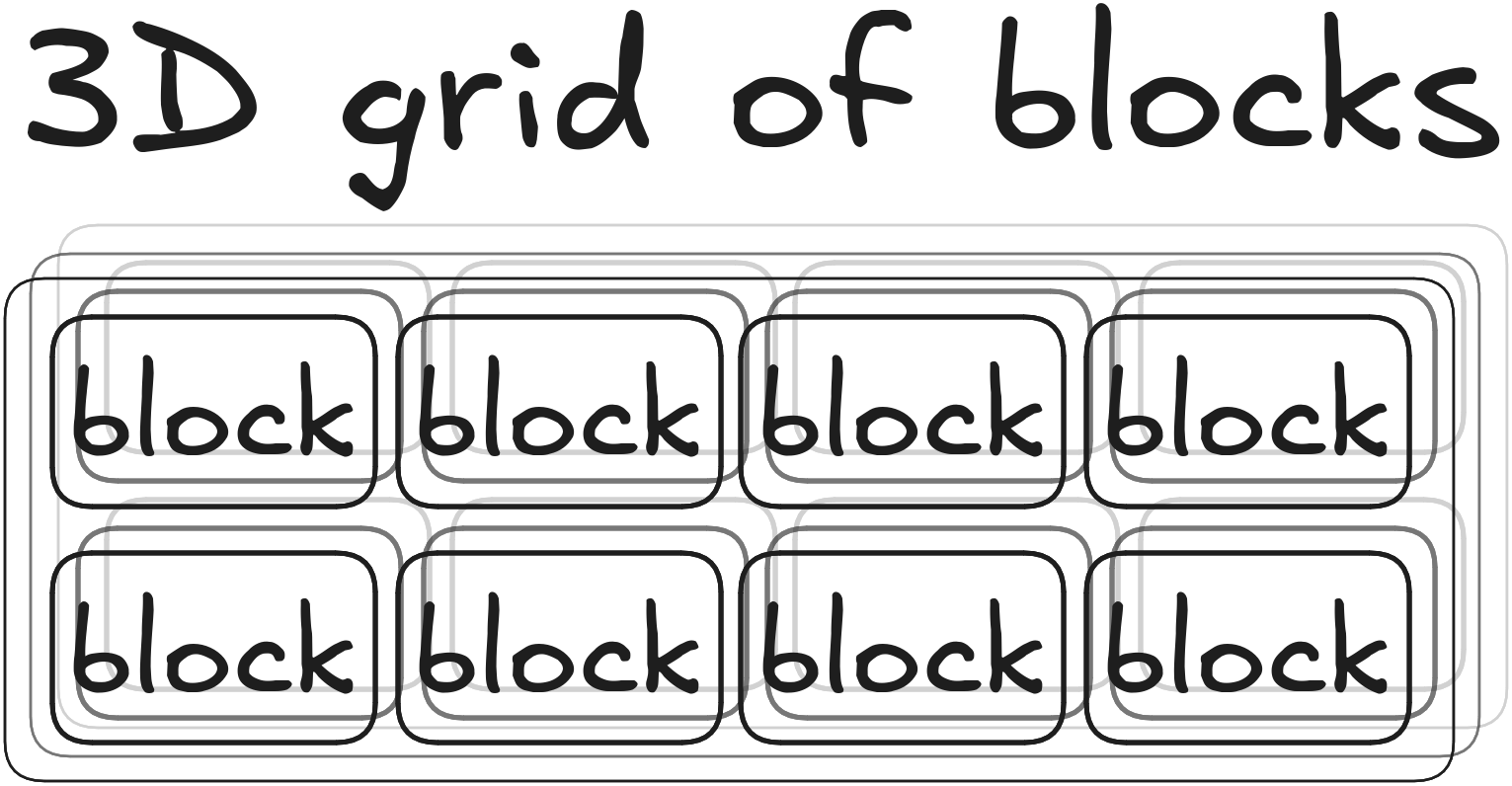

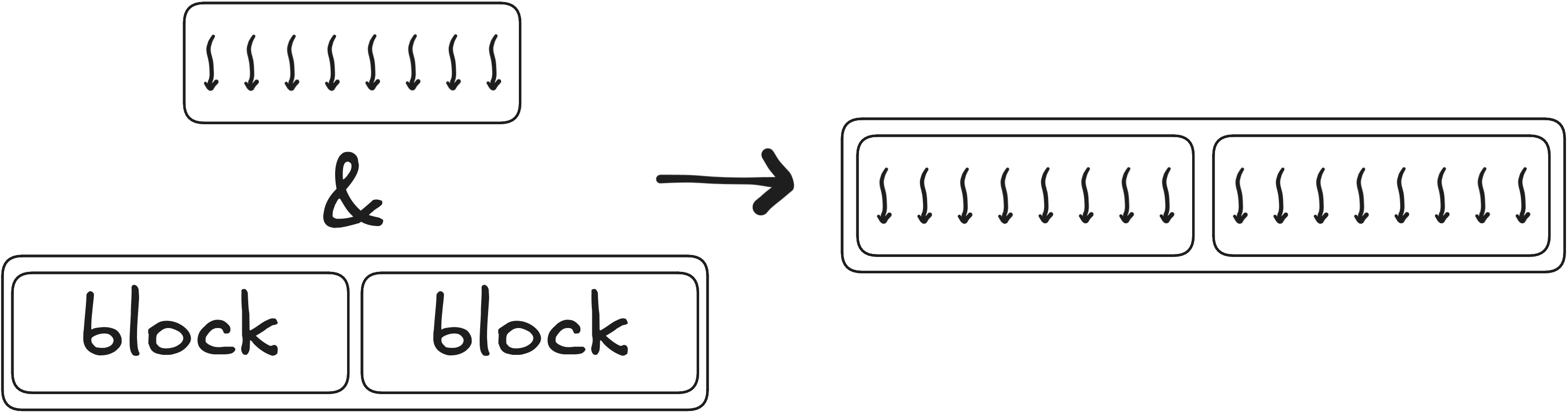

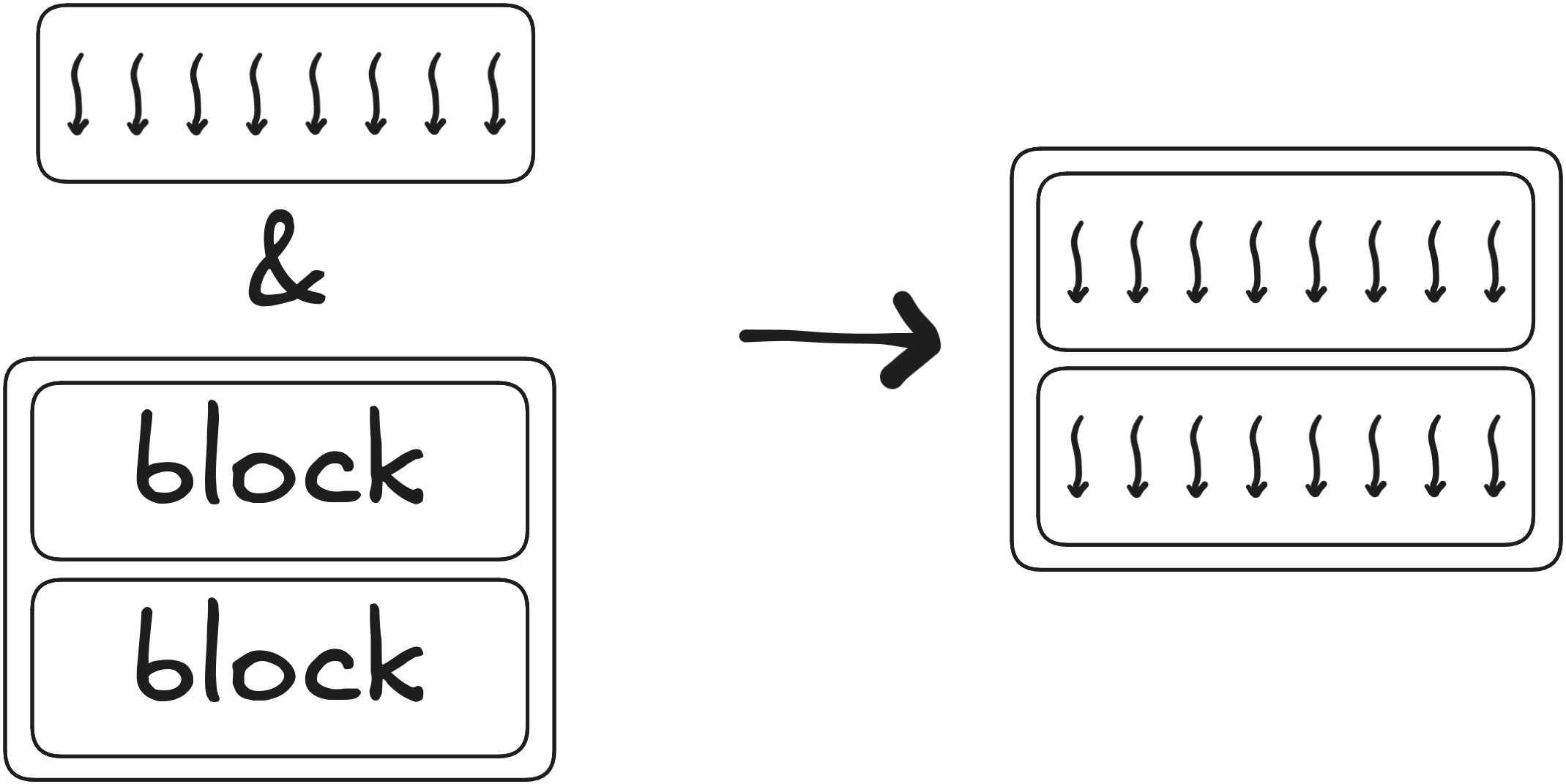

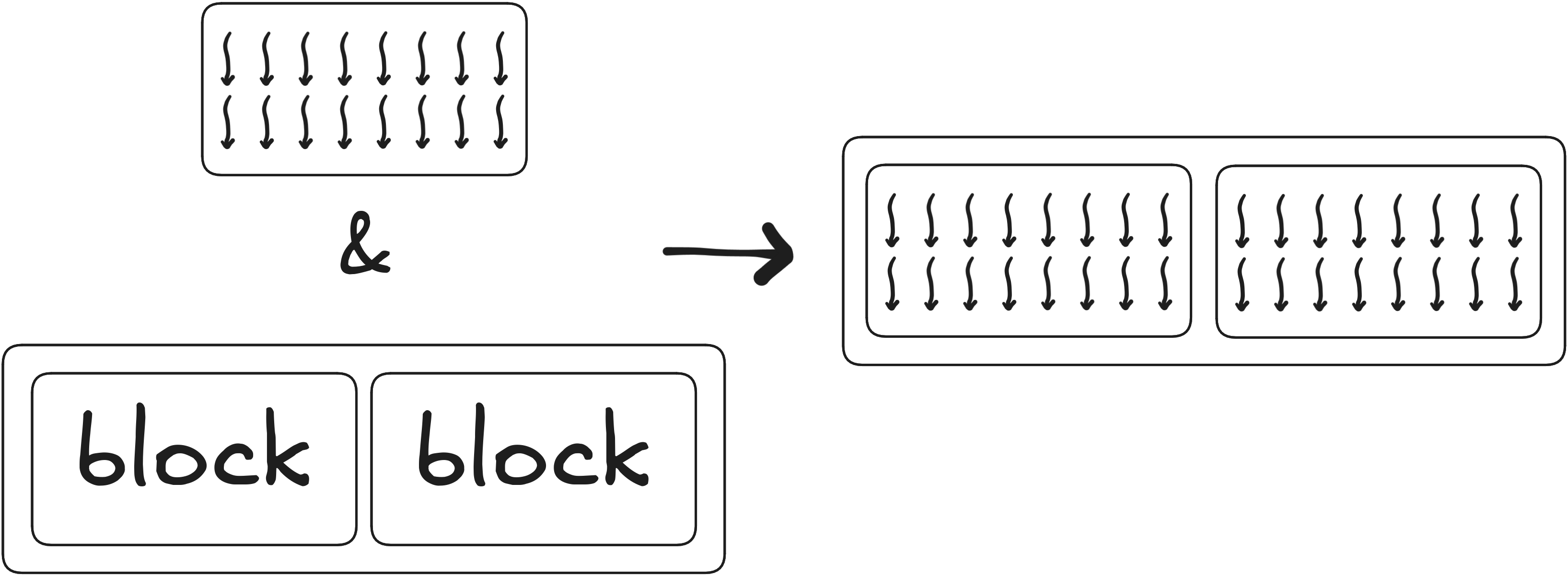

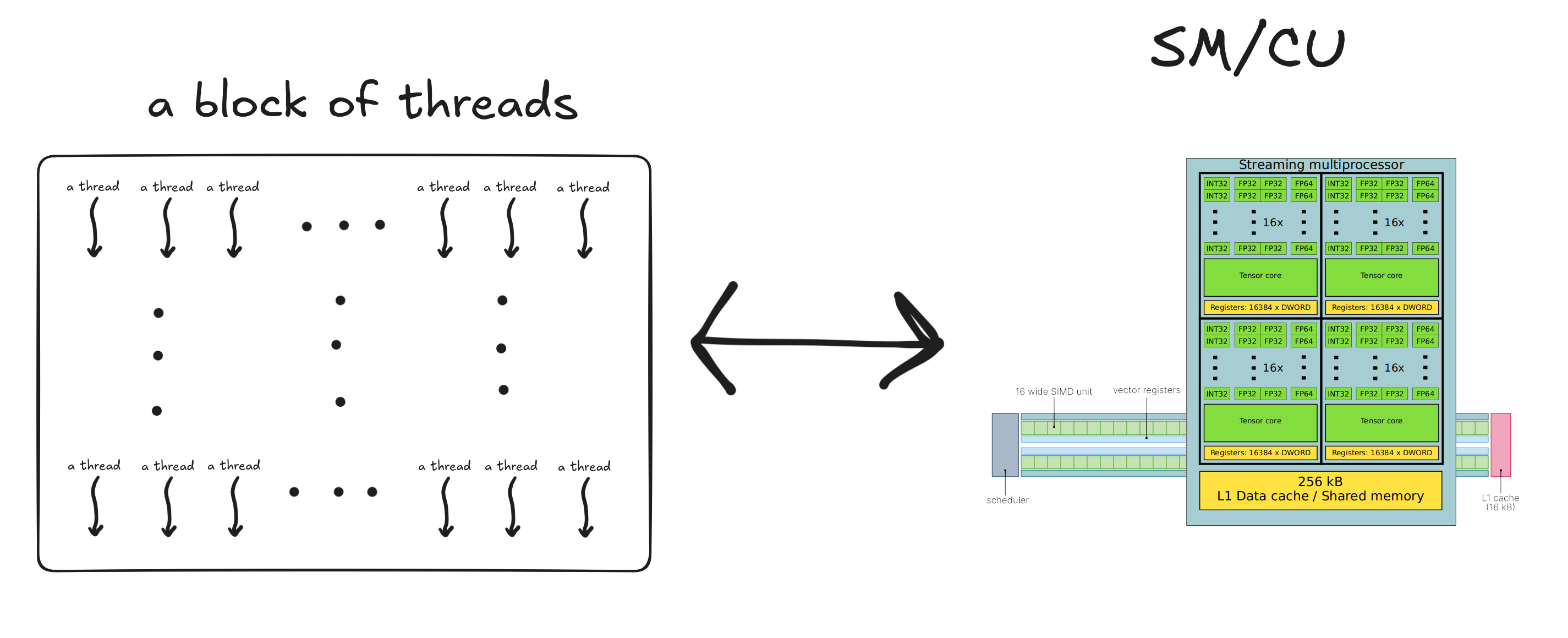

Block of threads

A block of threads can be 1D, 2D or 3D

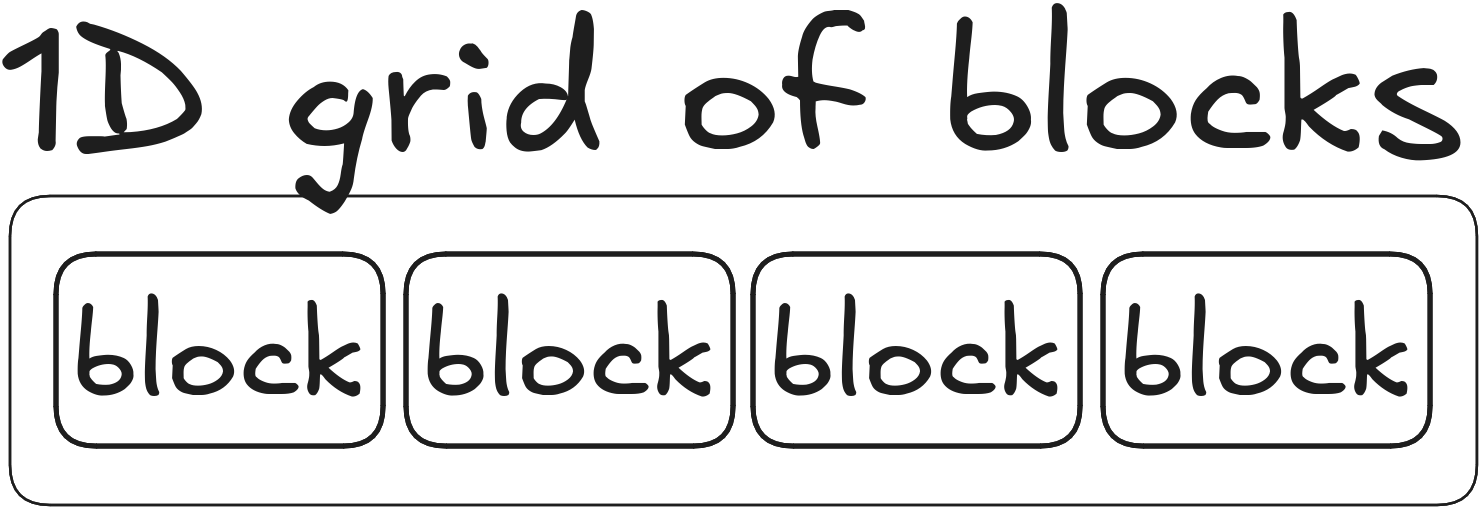

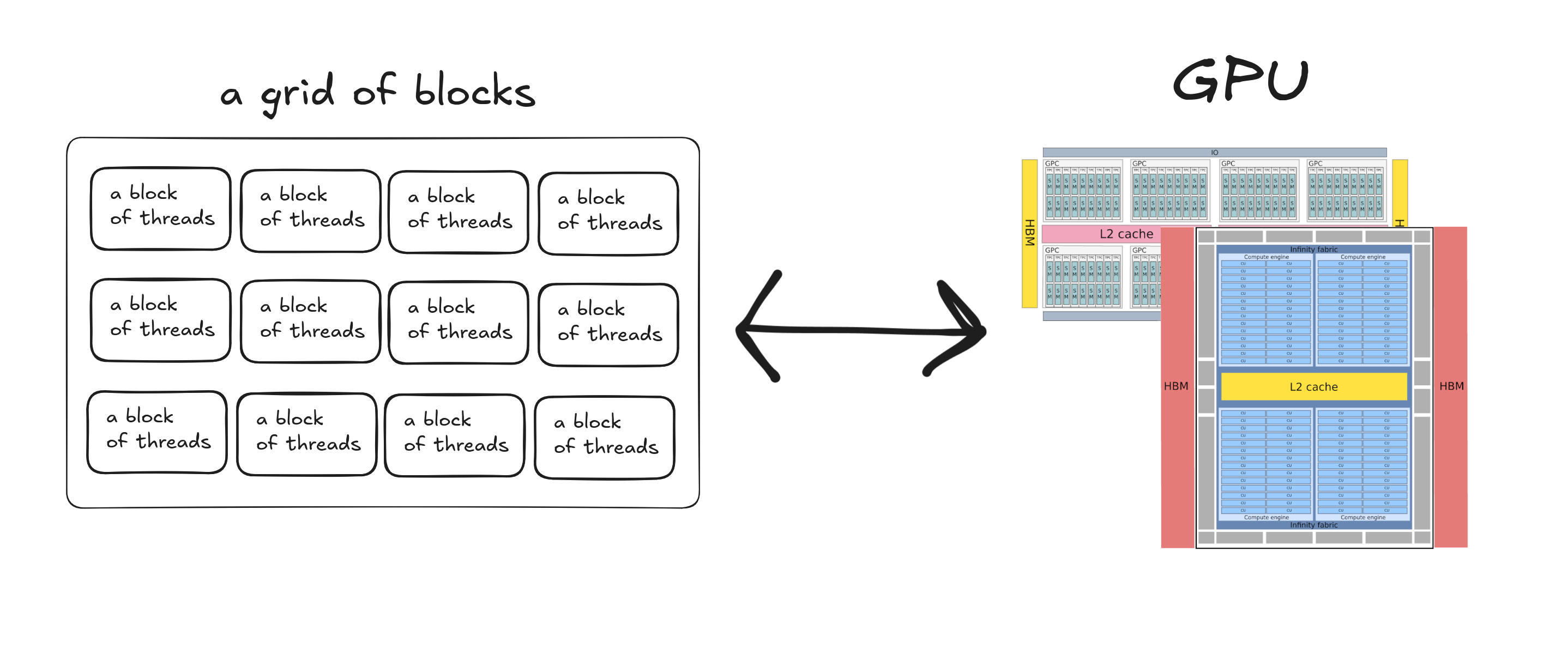

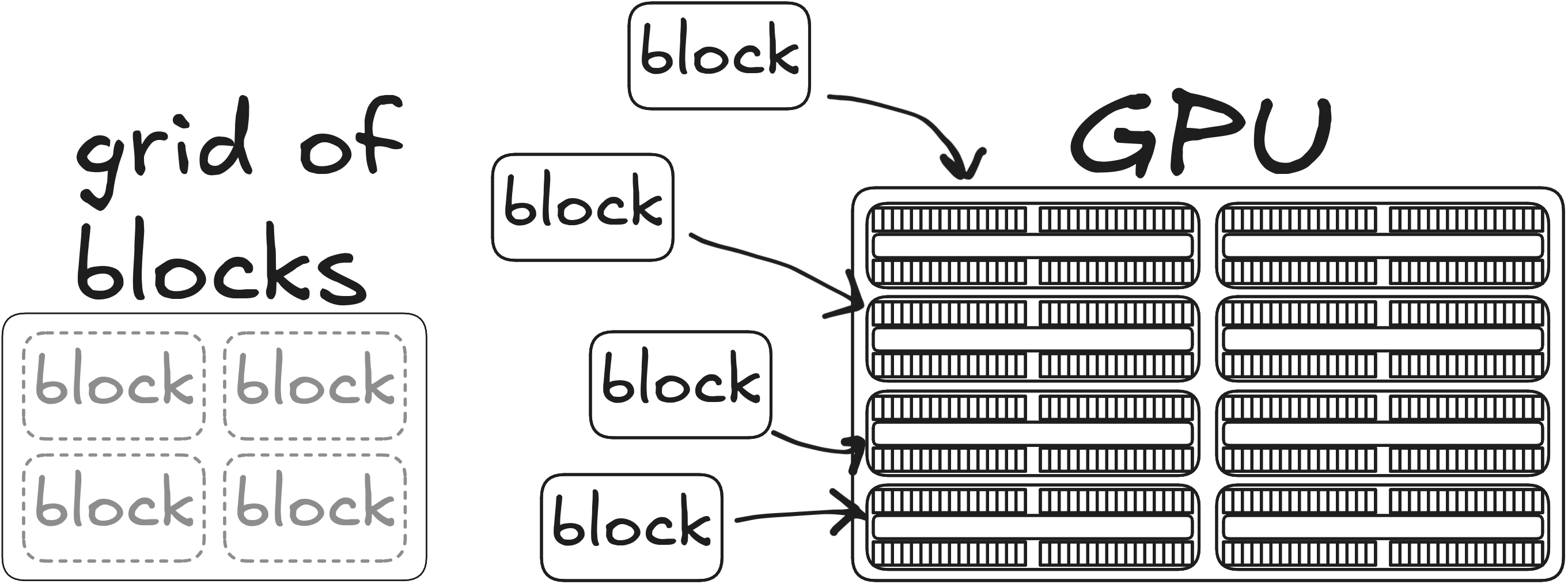

Grid of blocks

A grid of blocks can be 1D, 2D or 3D

Threads, blocks, grids

- threads/grid = threads/block \(\times\) blocks/grid

- device always operates over grids

Example grids 1

Example grids 2

Example grids 3

Part 4: Software – Hardware mapping

Grid – Device

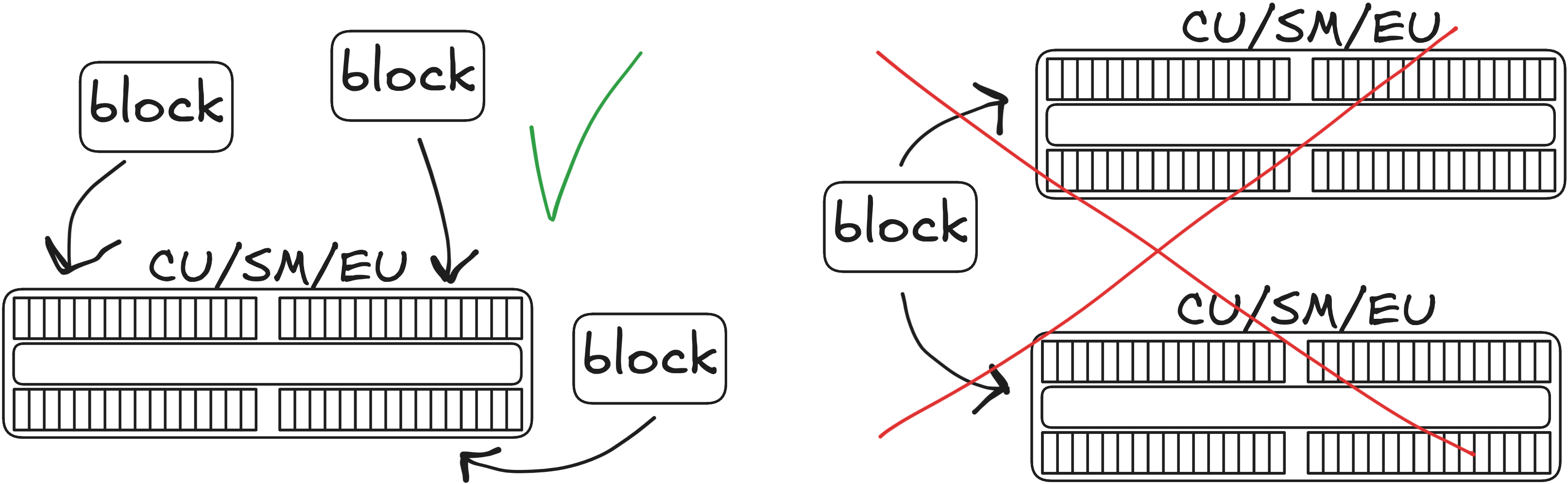

Block – SM/CU

Blocks – SM/CU

Block – SM/CU

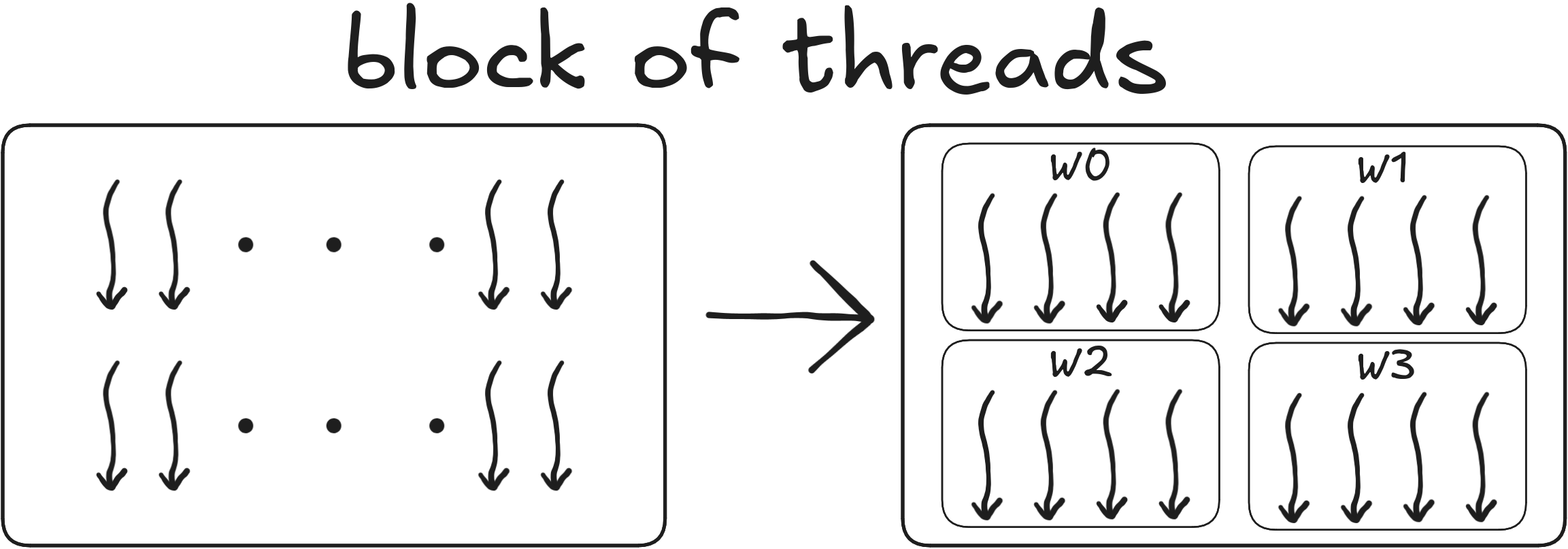

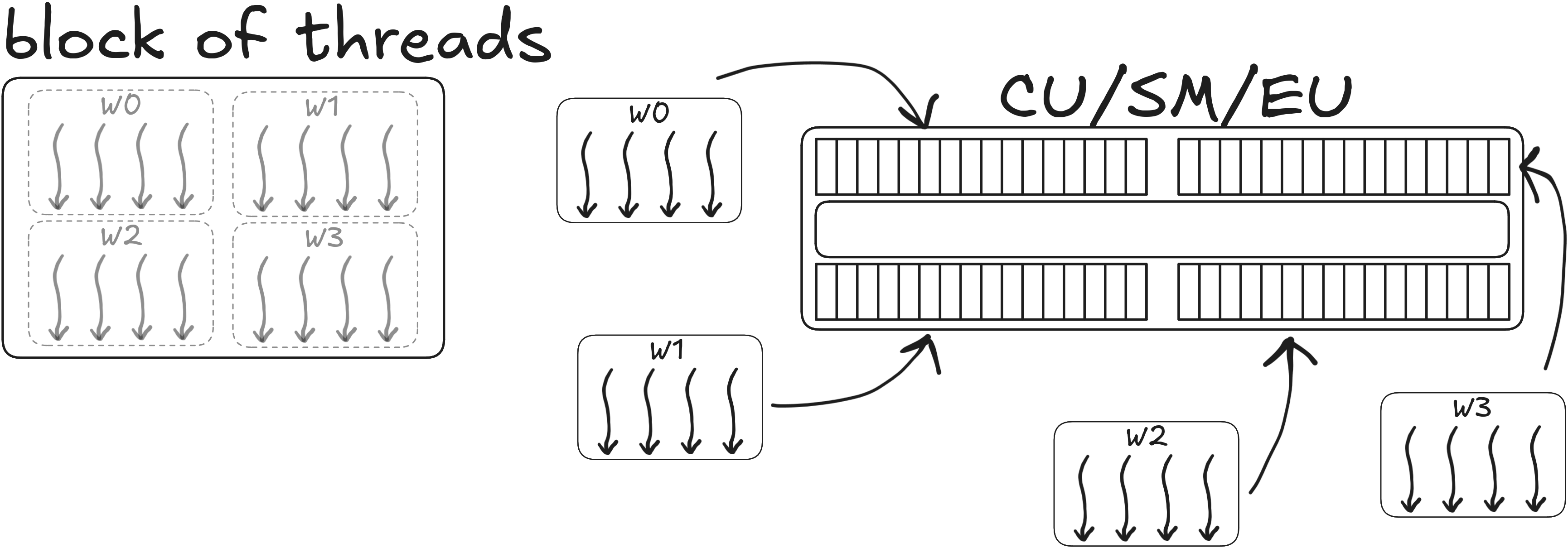

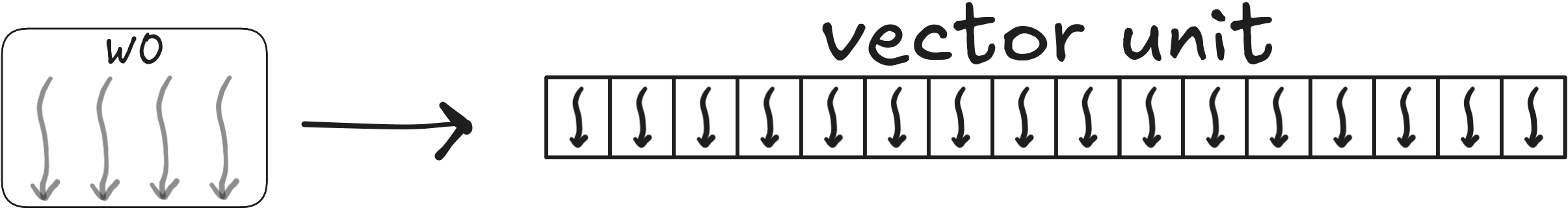

Warps, wavefronts

SM/CU breaks blocks of threads to

- warps of 32 consecutive threads (Nvidia), or

- wavefronts of 64 consecutive threads (AMD)

A 1D block of 256x1 threads gets partitioned to

| warp/wavefront ID | thread ID (Nvidia) | thread ID (AMD) |

|---|---|---|

| w0 | 0-31 | 0-63 |

| w1 | 32-63 | 64-127 |

| w2 | 64-95 | 128-191 |

| w3 | 96-127 | 192-255 |

| w4 | 128-159 | - |

| w5 | 160-191 | - |

| w6 | 192-223 | - |

| w7 | 224-255 | - |

Warps, wavefronts

Warp/Wavefront - SMSP/SIMD

Thread - lane

Recap

- massively parallel processor

- own memory space –> requires data movement

- useful when you have a lot of data

- consists of tens or hundreds of simple processors, with multiple vector units per processor

- 1-2 orders of magnitude more instruction per cycle compared to CPUs

Recap

- point of view of a single thread

- a grid of (blocks of) threads

- grid <–> device

- block <–> SM/CU

- warp/wavefront <–> SMSP/SIMD

- thread <–> lane

Questions?

Well I have couple

Assume GPU with 8 compute units, each with 4 SIMD-units of warp size 64.

What is the smallest number of blocks in a grid enough to utilize all compute units?

\((a)~ 1\quad (b)~ 32\quad (c)~ 8\)Assume there are 32 blocks. What is the minimum number of threads per block enough to utilize all of the hardware?

\((a)~ 64\quad (b)~ 256\quad (c)~ 32\)What brand of GPU is it based on terminology?