Streams, events, and synchronization

GPU programming with HIP

2026-05

CSC Training

Motivation

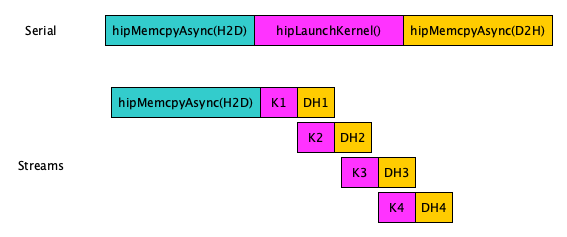

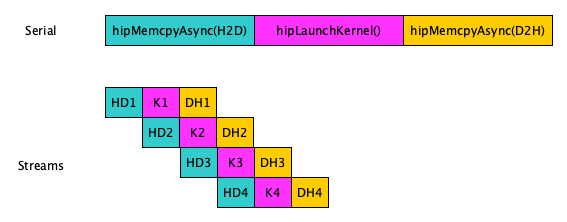

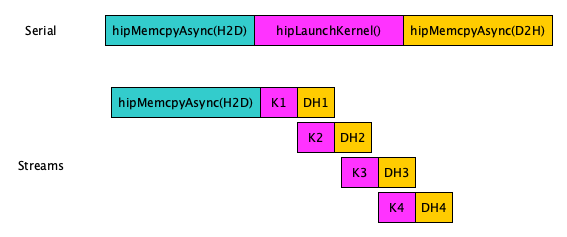

- So far we have learned a “serial” way of programming for GPUs

- Questions we now want to answer:

- Which operations could overlap?

- Could multiple kernels execute simultaneously?

- Could me move data between the host and device during kernel execution?

Outline

- Streams

- Events

- Synchronization

Outline

- Streams

- Kernels in different streams are asynchronous

- Kernels in same stream are executed in first-in-first-out order

- Execute Host-to-Device and Device-to-Host transfers concurrently with kernels

- Events

- Synchronize across streams and host

- Measure time

- Synchronization

- host ⇔ stream, host ⇔ event, host ⇔ device

- stream ⇔ event

- threads in block

What is a stream?

- A sequence (queue) of operations that execute in order on the GPU

- Operations in different streams may run concurrently

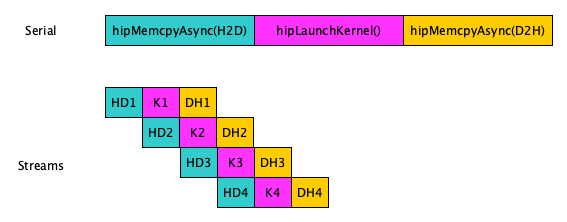

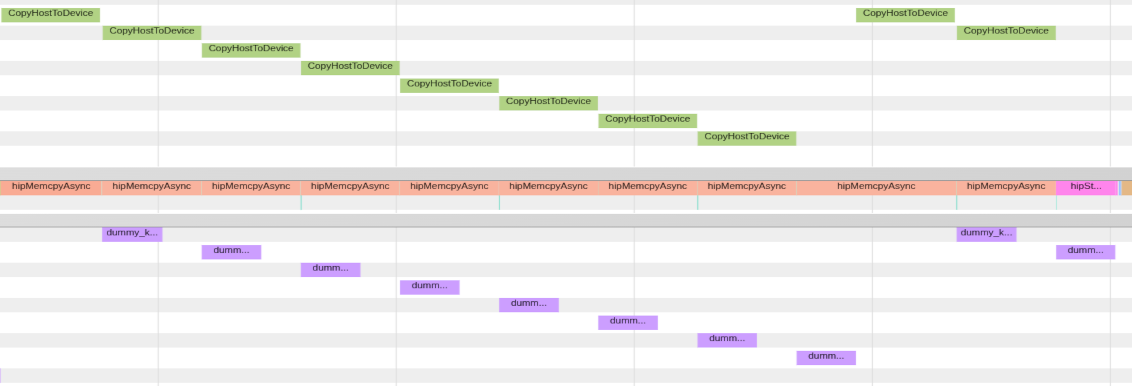

- H-to-D copy runs in a single stream, and the kernel and D-to-H copy are split into 4 streams

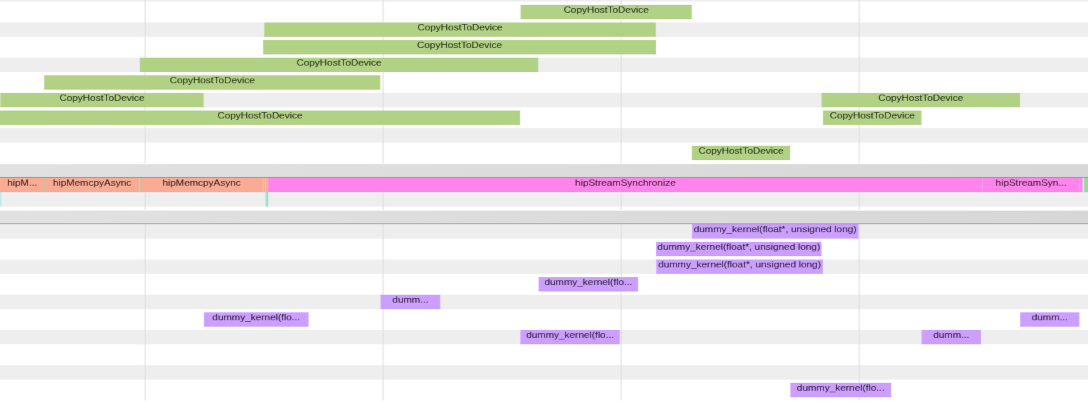

- H-to-D copy, kernel, and D-to-H copy are split into 4 streams

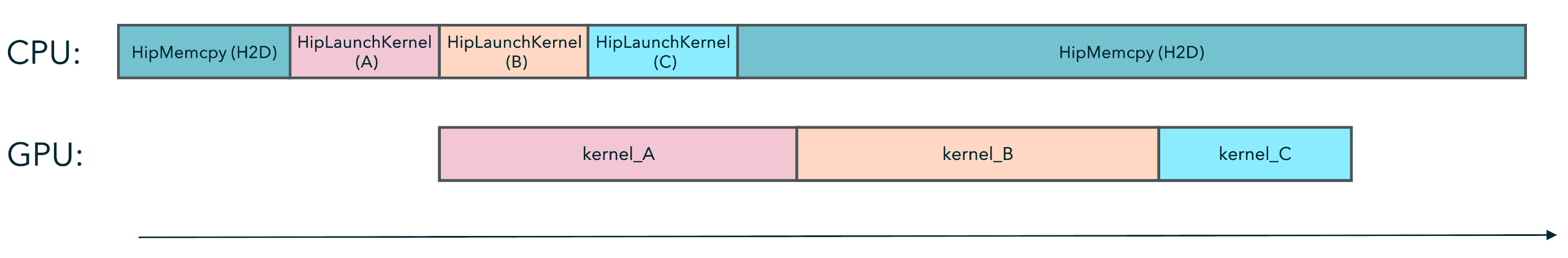

The default stream

- When you do not specify a stream to your kernel, it is sent to the

default stream

my_kernel<<<grid, block, 0, 0>>>(...)

- API functions operate on the default stream:

hipMalloc, hipMemcpy, hipFree, ...

- All operations (Malloc, Memcpy, Kernel execution) in the same stream

execute sequentially in submission order

- Hence, the sequential nature of what you have learnt so far

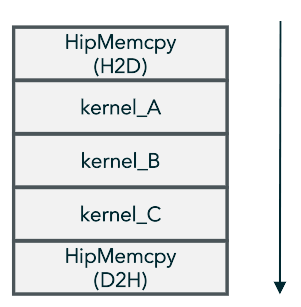

The order of execution in a stream

- Operations are sent to the stream and executed in a FIFO manner

Stream creation, synchronization, and destruction

- Declare a stream variable

- Create

stream

- Destroy

stream

Using multiple streams

- H-to-D copy runs in a single stream, and the kernel and D-to-H copy are split into 4 streams

- H-to-D copy, kernel, and D-to-H copy are split into 4 streams

Asynchronous operations and streams

- API functions operate on the default stream:

hipMalloc, hipMemcpy, hipFree, ... - Append

Asyncto name and addhipStream_tas last argument for asynchronous version:hipMalloc(...)⟶hipMallocAsync(..., hipStream_t stream)

Asynchronisity and kernels

- Running kernels concurrently require placing them in different

streams

- Default stream has special synchronization rules and cannot run concurrently with other streams (applies to all API calls)

- The stream is supplied to the kernel invocation:

my_kernel<<<grid, block, 0, stream>>>(...)hipLaunchKernelGGL(my_kernel, grid, block, 0, stream, ...)- Default stream:

my_kernel<<<grid, block, 0, 0>>>(...)

Stream example

hipStream_t stream[3];

for (int i = 0; i<3; ++i)

hipStreamCreate(&stream[i]);

for (int i = 0; i < 3; ++i) {

hipMemcpyAsync(d_data[i], h_data[i], bytes,

hipMemcpyHostToDevice, stream[i]);

hipkernel<<<grid, block, 0, stream[i]>>>

(d_data[i], i);

hipMemcpyAsync(h_data[i], d_data[i], bytes,

hipMemcpyDeviceToHost, stream[i]);

}

for(int i = 0; i<3; ++i) {

hipStreamSynchronize(stream[i]);

hipStreamDestroy(stream[i]); }Memory caveat:

- Host memory needs to be page-locked, otherwise memory copies are synchronous

Async memory copy with regular vs page-locked memory

Summary before moving to events

- When you do not specify a stream to your kernel, it is sent to the default stream

- Operations in a stream execute in a FIFO manner

- Multiple streams can execute concurrently on the same GPU

Events

- Synchronize other streams/host with events

- Measure time between events

- HIP API Documentation

Why events?

- Cut stream to fragments

- Useful for inter-stream synchronization and timing asynchronous events

- Events have a boolean state: occurred / not occurred

- Query with

hipError_t hipEventQuery(hipEvent_t event):hipSuccess/hipErrorNotReady

- Query with

Measure how fast host places tasks to stream:

// Start timed GPU kernel

clock_t start_kernel_clock = clock();

kernel<<<gridsize, blocksize, 0, stream>>>(d_a, n_total);

// Start timed device-to-host memcopy

clock_t start_d2h_clock = clock();

hipMemcpyAsync(a, d_a, bytes, hipMemcpyDeviceToHost, stream);

// Stop timing

clock_t stop_clock = clock();

hipStreamSynchronize(stream);Measure duration of tasks on GPU:

// Start timed GPU kernel

hipEventRecord(start_kernel_event, stream);

kernel<<<gridsize, blocksize, 0, stream>>>(d_a, n_total);

// Start timed device-to-host memcopy

hipEventRecord(start_d2h_event, stream);

hipMemcpyAsync(a, d_a, bytes, hipMemcpyDeviceToHost, stream);

// Stop timing

hipEventRecord(stop_event, stream);

hipEventSynchronize(stop_event);Events: Central API calls

| Description | API call |

|---|---|

Initialize event object |

hipEventCreate(hipEvent_t* event) |

Record an event in the stream |

hipEventRecord(hipEvent_t event, hipStream_t stream) |

Elapsed time (ms) between start and

end |

hipEventElapsedTime(float* ms, hipEvent_t start, hipEvent_t end) |

Make stream wait for event |

hipStreamWaitEvent(hipStream_t stream, hipEvent_t event, unsigned int flags = 0) |

Wait for event to complete |

hipEventSynchronize(hipEvent_t event) |

Destroy event object |

hipEventDestroy(hipEvent_t event) |

- All of the above return

hipError_t

Lastly, synchronization

- GPU operations are asynchronous with respect to the host

- The CPU may continue executing while kernels are still running

- Memory copies and kernels may overlap

- Synchronization is needed when:

- The host (CPU) needs results produced by the GPU

- One operation depends on another operation completing

- Without synchronization:

- The CPU may access incomplete data

- Operations may execute in the wrong order

Central synchronization API calls

- Synchronize the host with a specific stream

- Synchronize the host with a specific event

- Synchronize a specific stream with a specific event (the event can be in another stream)

- Synchronize the host with the whole device (wait until all device tasks are finished)

- In-kernel blockwise synchronization across threads (not between host/device)

Summary

- Streams provide a mechanism to compute tasks on the GPU concurrently

- Events provide a mechanism to signal when

operations have occurred in a stream

- Good for inter-stream sychronization and timing events

- Many host/device synchronizations functions for

different purposes

- The device function

__syncthreads()is only for in-kernel synchronization between threads in a same block (does not synch threads across blocks)

- The device function